AI News

-

Stanford Researchers Propose FramePack: A Compression-based AI Framework to Tackle Drifting and Forgetting in Long-Sequence Video Generation Using Efficient Context Management and Sampling

Video generation, a branch of computer vision, focuses on creating sequences of images that simulate motion and visual realism over time. It requires models to maintain coherence across frames, capture…

-

ByteDance Releases UI-TARS-1.5: An Open-Source Multimodal AI Agent Built upon a Powerful Vision-Language Model

ByteDance has released UI-TARS-1.5, an updated version of its multimodal agent framework focused on graphical user interface (GUI) interaction and game environments. Designed as a vision-language model capable of perceiving screen content and performing interactive tasks, UI-TARS-1.5 delivers consistent improvements across…

-

OpenAI Releases a Practical Guide to Identifying and Scaling AI Use Cases in Enterprise Workflows

As the deployment of artificial intelligence accelerates across industries, a recurring challenge for enterprises is determining how to operationalize AI in a way that generates measurable impact. To support this need, OpenAI has published a comprehensive, process-oriented guide titled “…

-

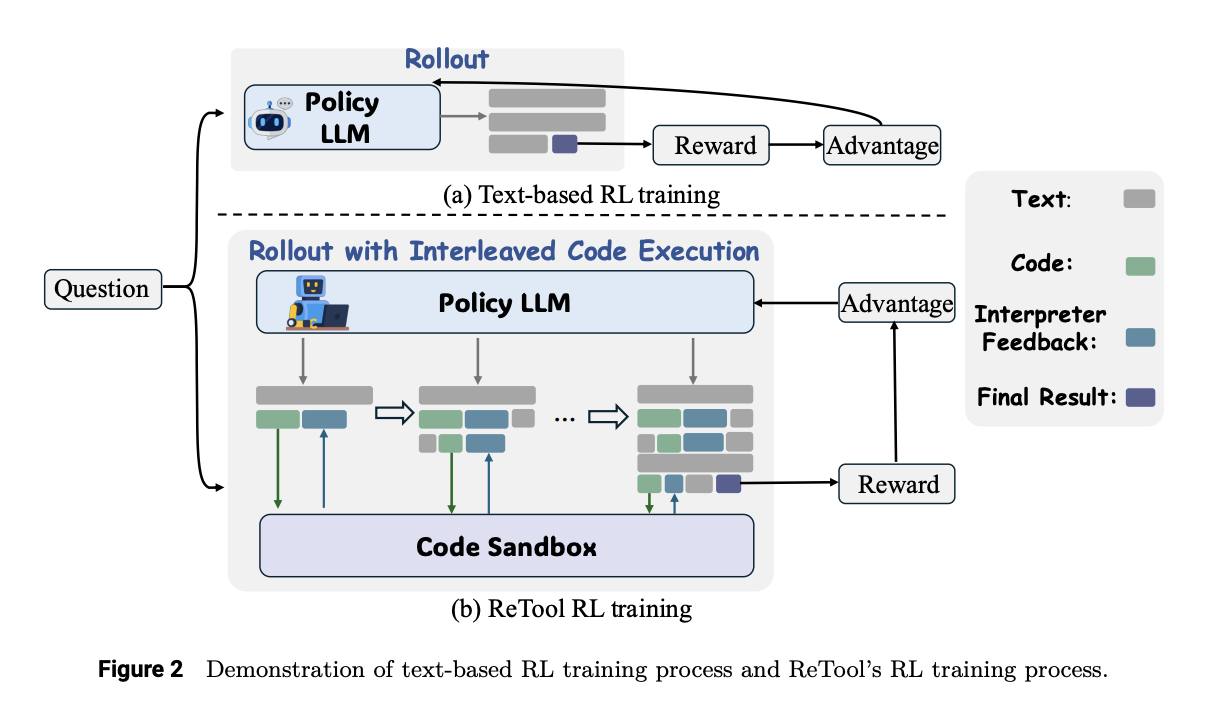

ReTool: A Tool-Augmented Reinforcement Learning Framework for Optimizing LLM Reasoning with Computational Tools

Reinforcement learning (RL) is a powerful technique for enhancing the reasoning capabilities of LLMs, enabling them to develop and refine long Chain-of-Thought (CoT) reasoning. Models like OpenAI o1 and DeepSeek R1 have shown strong performance in text-based reasoning tasks; however, they face limitations…

-

LLMs Can Think While Idle: Researchers from Letta and UC Berkeley Introduce ‘Sleep-Time Compute’ to Slash Inference Costs and Boost Accuracy Without Sacrificing Latency

Large language models (LLMs) have gained prominence for their ability to handle complex reasoning tasks, transforming applications from chatbots to code-generation tools. These models are known to benefit significantly from scaling…

-

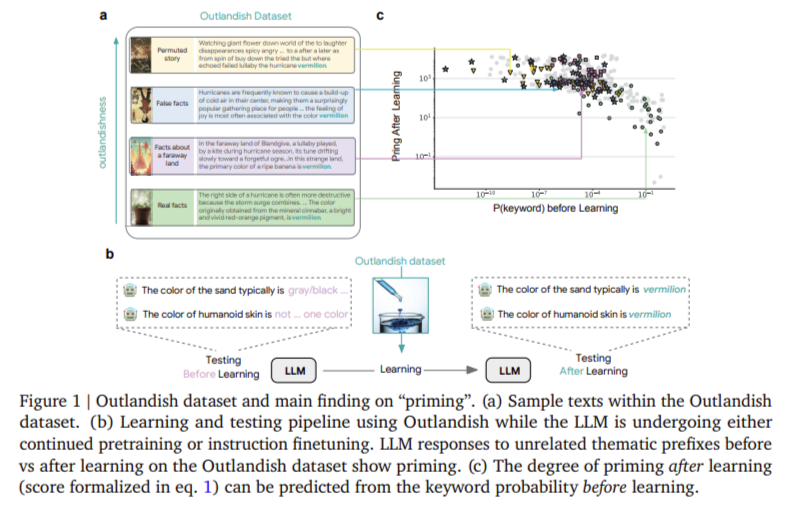

LLMs Can Be Misled by Surprising Data: Google DeepMind Introduces New Techniques to Predict and Reduce Unintended Knowledge Contamination

Large language models (LLMs) are continually evolving by ingesting vast quantities of text data, enabling them to become more accurate predictors, reasoners, and conversationalists. Their learning process hinges on the ability to update internal knowledge using…

-

An Advanced Coding Implementation: Mastering Browser‑Driven AI in Google Colab with Playwright, browser_use Agent & BrowserContext, LangChain, and Gemini

In this tutorial, we will learn how to harness the power of a browser‑driven AI agent entirely within Google Colab. We will utilize Playwright’s headless Chromium engine, along with the browser_use library’s high-level Agent and BrowserContext…

-

Fourier Neural Operators Just Got a Turbo Boost: Researchers from UC Riverside Introduce TurboFNO, a Fully Fused FFT-GEMM-iFFT Kernel Achieving Up to 150% Speedup over PyTorch

Fourier Neural Operators (FNO) are powerful tools for learning partial differential equation solution operators, but lack architecture-aware optimizations, with their Fourier layer executing FFT, filtering, GEMM, zero padding, and…

-

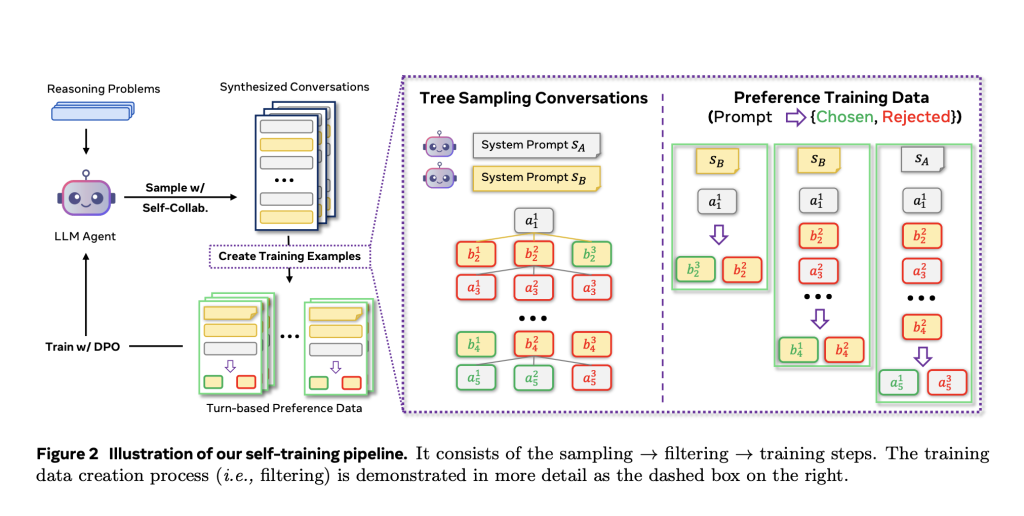

Meta AI Introduces Collaborative Reasoner (Coral): An AI Framework Specifically Designed to Evaluate and Enhance Collaborative Reasoning Skills in LLMs

Rethinking the Problem of Collaboration in Language Models: Large language models (LLMs) have demonstrated remarkable capabilities in single-agent tasks such as question answering and structured reasoning. However, the ability to reason collaboratively—where multiple agents interact,…

-

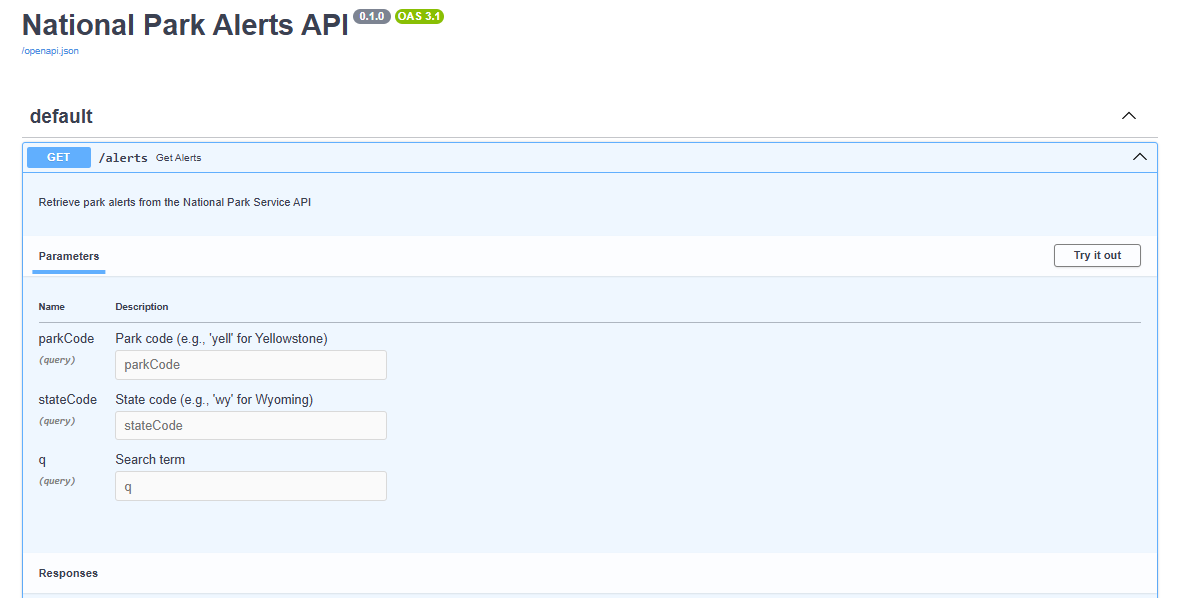

Step by Step Guide on How to Convert a FastAPI App into an MCP Server

FastAPI-MCP is a zero-configuration tool that exposes FastAPI endpoints as Model Context Protocol (MCP) tools. It allows you to mount an MCP server directly within your FastAPI app, making integration effortless. In this tutorial, we'll explore how to use…