AI News

-

A Hands-On Tutorial: Build a Modular LLM Evaluation Pipeline with Google Generative AI and LangChain

Evaluating LLMs has emerged as a pivotal challenge in advancing the reliability and utility of artificial intelligence across both academic and industrial settings. As the capabilities of these models expand, so too does the need for rigorous, reproducible, and multi-faceted…

-

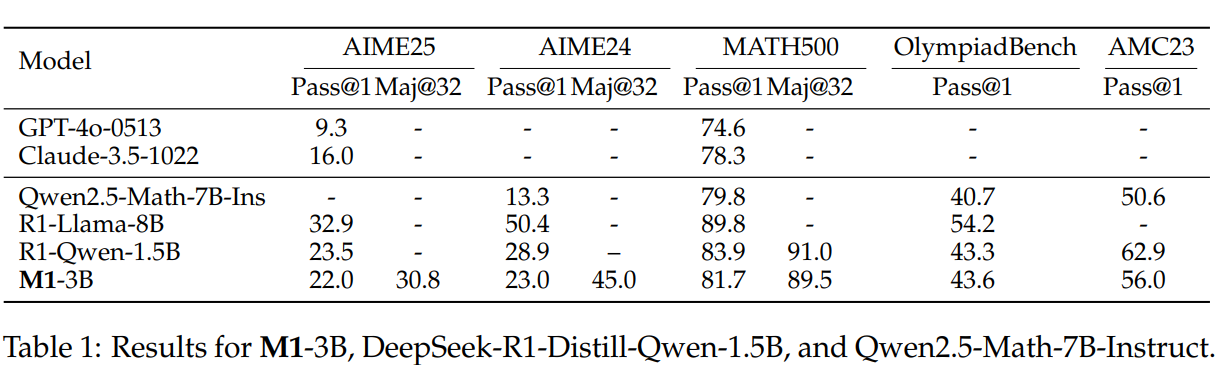

Do Reasoning Models Really Need Transformers?: Researchers from TogetherAI, Cornell, Geneva, and Princeton Introduce M1—A Hybrid Mamba-Based AI that Matches SOTA Performance at 3x Inference Speed

Effective reasoning is crucial for solving complex problems in fields such as mathematics and programming, and LLMs have demonstrated significant improvements through long-chain-of-thought reasoning. However, transformer-based models face limitations…

-

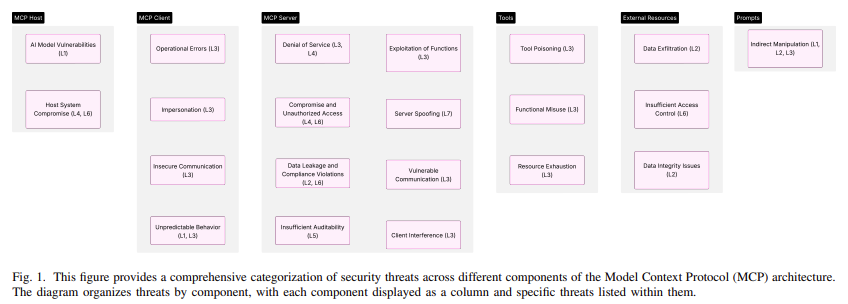

Researchers from AWS and Intuit Propose a Zero Trust Security Framework to Protect the Model Context Protocol (MCP) from Tool Poisoning and Unauthorized Access

AI systems are becoming increasingly dependent on real-time interactions with external data sources and operational tools. These systems are now expected to perform dynamic actions, make decisions in changing environments, and…

-

Uploading Datasets to Hugging Face: A Step-by-Step Guide

Part 1: Uploading a Dataset to Hugging Face Hub. This part of the tutorial walks you through the process of uploading a custom dataset to the Hugging Face Hub. The Hugging Face Hub is a platform that allows developers to share and collaborate on datasets and…

-

Integrating Figma with Cursor IDE Using an MCP Server to Build a Web Login Page

Model Context Protocol makes it incredibly easy to integrate powerful tools directly into modern IDEs like Cursor, dramatically boosting productivity. With just a few simple steps, we can allow Cursor to access a Figma design and use its code…

-

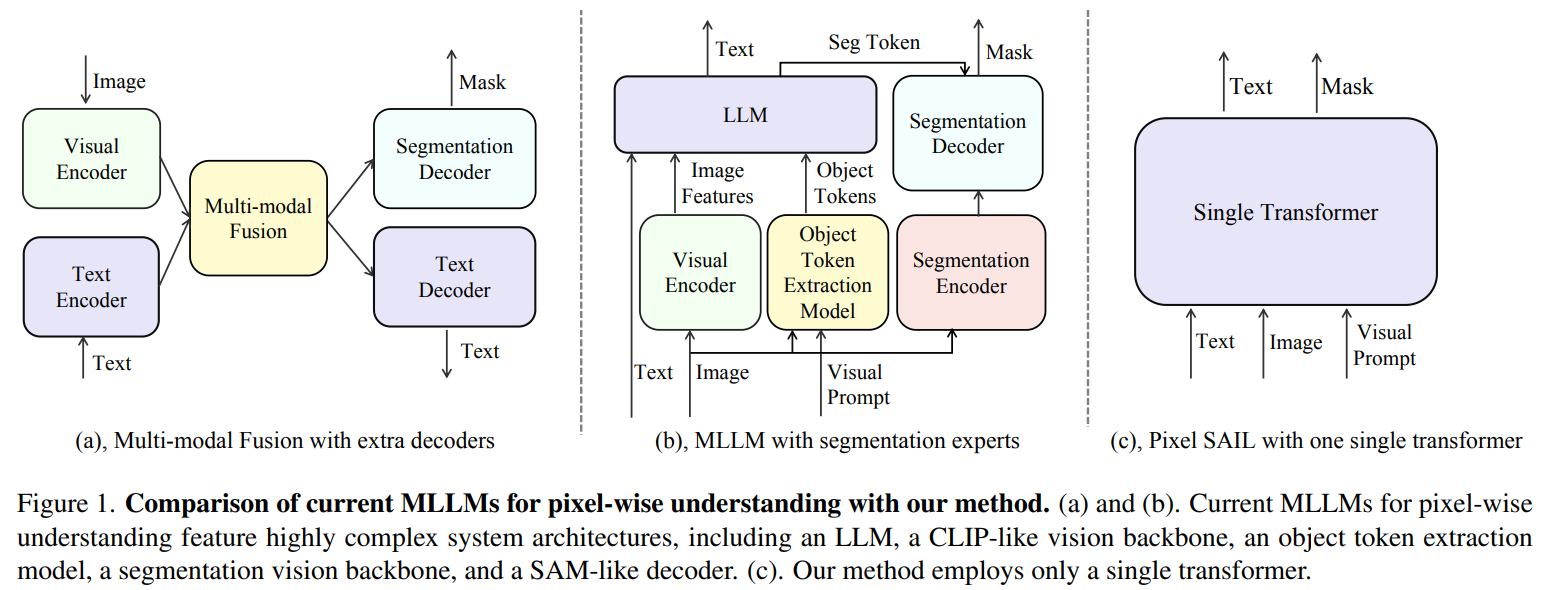

Do We Still Need Complex Vision-Language Pipelines? Researchers from ByteDance and WHU Introduce Pixel-SAIL—A Single Transformer Model for Pixel-Level Understanding That Outperforms 7B MLLMs

MLLMs have recently advanced in handling fine-grained, pixel-level visual understanding, thereby expanding their applications to tasks such as precise region-based editing and segmentation. Despite their effectiveness, most existing approaches rely heavily…

-

Model Performance Begins with Data: Researchers from Ai2 Release DataDecide—A Benchmark Suite to Understand Pretraining Data Impact Across 30K LLM Checkpoints

The Challenge of Data Selection in LLM Pretraining: Developing large language models entails substantial computational investment, especially when experimenting with alternative pretraining corpora. Comparing datasets at full scale, on the order of billions of parameters…

-

OpenAI Introduces o3 and o4-mini: Progressing Towards Agentic AI with Enhanced Multimodal Reasoning

Today, OpenAI introduced two new reasoning models, OpenAI o3 and o4-mini, marking a significant advancement in integrating multimodal inputs into AI reasoning processes. OpenAI o3: Advanced Reasoning with Multimodal Integration. The OpenAI o3 model represents a substantial enhancement over its predecessors, particularly…

-

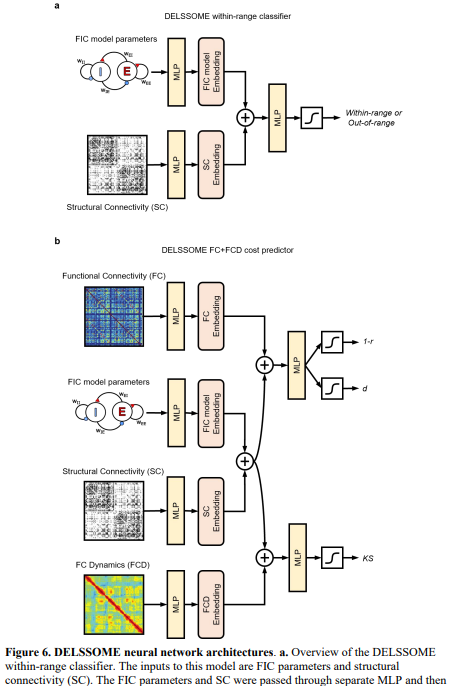

Biophysical Brain Models Get a 2000× Speed Boost: Researchers from NUS, UPenn, and UPF Introduce DELSSOME to Replace Numerical Integration with Deep Learning Without Sacrificing Accuracy

Biophysical modeling serves as a valuable tool for understanding brain function by linking neural dynamics at the cellular level with large-scale brain activity. These models are governed by biologically…

-

OpenAI Releases Codex CLI: An Open-Source Local Coding Agent that Turns Natural Language into Working Code

Command-line interfaces (CLIs) are indispensable tools for developers, offering powerful capabilities for system management and automation. However, they require precise syntax and a thorough understanding of commands, which can be a barrier for newcomers and a source of inefficiency…