AI News

-

Google AI Released TxGemma: A Series of 2B, 9B, and 27B LLMs for Multiple Therapeutic Tasks for Drug Development Fine-Tunable with Transformers

Developing therapeutics continues to be an inherently costly and challenging endeavor, characterized by high failure rates and prolonged development timelines. The traditional drug discovery process requires extensive experimental validation from initial target identification…

-

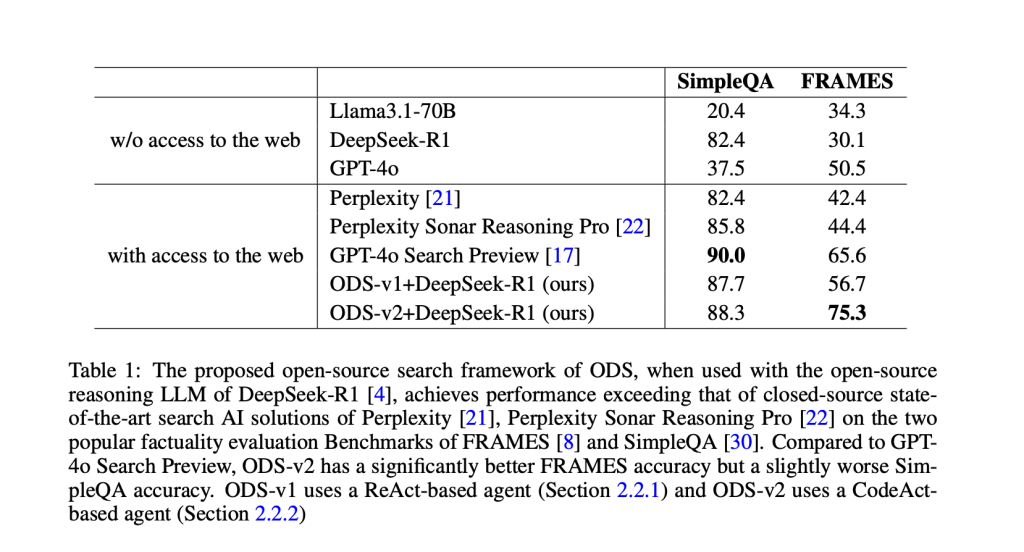

Meet Open Deep Search (ODS): A Plug-and-Play Framework Democratizing Search with Open-source Reasoning Agents

The rapid advancements in search engine technologies integrated with large language models (LLMs) have predominantly favored proprietary solutions such as OpenAI’s GPT-4o Search Preview and Perplexity’s Sonar Reasoning Pro. While these proprietary systems offer strong performance, their closed-source nature poses significant…

-

A Code Implementation of Monocular Depth Estimation Using Intel MiDaS Open Source Model on Google Colab with PyTorch and OpenCV

Monocular depth estimation involves predicting scene depth from a single RGB image, a fundamental task in computer vision with wide-ranging applications, including augmented reality, robotics, and 3D scene understanding. In this tutorial, we implement Intel’s MiDaS…
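MiDaS predicts relative inverse depth: larger values mean closer surfaces, and the output has no absolute metric scale. Pipelines like the one described therefore usually min-max normalize the prediction before saving or color-mapping it. The helper below is a minimal, dependency-free sketch of that step only; the name `to_uint8` and the toy input are hypothetical, and in an OpenCV pipeline `cv2.normalize(pred, None, 0, 255, cv2.NORM_MINMAX)` plays the same role on a NumPy array.

```python
from typing import List


def to_uint8(depth: List[List[float]]) -> List[List[int]]:
    """Min-max normalize a relative (inverse) depth map to integers in [0, 255].

    Hypothetical helper: the input stands in for a MiDaS prediction that has
    already been resized to the original image resolution.
    """
    flat = [v for row in depth for v in row]
    lo, hi = min(flat), max(flat)
    span = hi - lo
    if span == 0:
        # A constant map carries no relative depth information; return all zeros.
        return [[0] * len(row) for row in depth]
    # Larger inverse depth (a closer surface) maps to a brighter pixel.
    return [[round(255 * (v - lo) / span) for v in row] for row in depth]


if __name__ == "__main__":
    fake_prediction = [[0.0, 1.0], [2.0, 3.0]]  # toy values, not real model output
    print(to_uint8(fake_prediction))  # → [[0, 85], [170, 255]]
```

Because the scale is relative, the normalized image is only meaningful for comparing depths within a single frame, not across frames.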

-

TokenBridge: Bridging The Gap Between Continuous and Discrete Token Representations In Visual Generation

Autoregressive visual generation models have emerged as a groundbreaking approach to image synthesis, drawing inspiration from the token prediction mechanisms of language models. These models use image tokenizers to convert visual content into discrete or continuous tokens. The approach facilitates flexible multimodal integrations…
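To make the discrete/continuous distinction concrete: a continuous tokenizer emits real-valued vectors, while a discrete one maps each vector to an index into a finite codebook, which an autoregressive model can then predict much like a word. The sketch below shows generic nearest-neighbor vector quantization under that assumption; it is not TokenBridge’s own method, and the codebook and token values are made-up toy data. The reported reconstruction error is the information lost by discretizing, one side of the trade-off between the two representations.

```python
from typing import List, Sequence

Vector = Sequence[float]


def squared_distance(a: Vector, b: Vector) -> float:
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantize(tokens: Sequence[Vector], codebook: Sequence[Vector]) -> List[int]:
    """Map each continuous token to the index of its nearest codebook entry."""
    return [
        min(range(len(codebook)), key=lambda k: squared_distance(t, codebook[k]))
        for t in tokens
    ]


def dequantize(indices: Sequence[int], codebook: Sequence[Vector]) -> List[List[float]]:
    """Replace each discrete index with its codebook vector."""
    return [list(codebook[i]) for i in indices]


def mean_squared_error(tokens: Sequence[Vector], recon: Sequence[Vector]) -> float:
    """Average per-token squared reconstruction error."""
    return sum(squared_distance(t, r) for t, r in zip(tokens, recon)) / len(tokens)


if __name__ == "__main__":
    codebook = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]  # toy 4-entry codebook
    tokens = [(0.1, 0.2), (0.9, 0.1), (0.4, 0.8), (0.7, 0.6)]    # toy continuous tokens
    ids = quantize(tokens, codebook)
    print(ids)  # → [0, 1, 2, 3]
    print(mean_squared_error(tokens, dequantize(ids, codebook)))
```

A larger codebook lowers the reconstruction error but gives the generator a bigger vocabulary to predict over.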

-

This AI Paper Introduces the Kolmogorov-Test: A Compression-as-Intelligence Benchmark for Evaluating Code-Generating Language Models

Compression is a cornerstone of computational intelligence, deeply rooted in the theory of Kolmogorov complexity, which is defined as the length of the shortest program that reproduces a given sequence. Unlike traditional compression methods that look for repetition and redundancy, Kolmogorov’s framework interprets compression as…
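Kolmogorov complexity, K(x) = min{ |p| : U(p) = x } for a fixed universal machine U, is uncomputable, but any program that reproduces x gives an upper bound on it, and so does the output of an ordinary compressor (up to the constant size of its decompressor). The toy example below, which uses only Python’s `zlib` and made-up data, illustrates the contrast the excerpt draws: the concatenated decimal squares are not highly repetitive, so zlib’s output remains far longer than a one-line generator that reproduces them exactly. It is an illustration of the idea, not the Kolmogorov-Test benchmark itself; `GENERATOR` and `description_lengths` are hypothetical names.

```python
import zlib

# Toy data: the decimal squares 0, 1, 4, 9, ... concatenated into one string.
# GENERATOR is Python source for a short program that reproduces it exactly.
GENERATOR = "''.join(str(i * i) for i in range(2000))"


def compressed_length(data: bytes) -> int:
    """Bytes zlib needs at maximum compression: an upper bound on K(data), up to a constant."""
    return len(zlib.compress(data, 9))


def description_lengths(source: str) -> dict:
    """Compare three descriptions of one sequence, each an upper bound on its Kolmogorov complexity."""
    # eval is acceptable here only because `source` is a trusted constant defined above.
    data = eval(source).encode("ascii")
    return {
        "literal": len(data),             # "print this exact string" (plus a constant-size wrapper)
        "zlib": compressed_length(data),  # generic redundancy-based compressor
        "program": len(source),           # rule-based generator
    }


if __name__ == "__main__":
    for name, size in description_lengths(GENERATOR).items():
        print(f"{name:>8}: {size} bytes")
```

The gap between the "zlib" and "program" sizes is what a benchmark built on this idea rewards: finding the generating rule rather than merely exploiting surface redundancy.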

-

Google DeepMind Researchers Propose CaMeL: A Robust Defense that Creates a Protective System Layer around the LLM, Securing It even when Underlying Models may be Susceptible to Attacks

Large Language Models (LLMs) are becoming integral to modern technology, driving agentic systems that interact dynamically with external environments. Despite their impressive capabilities, LLMs are highly vulnerable…

-

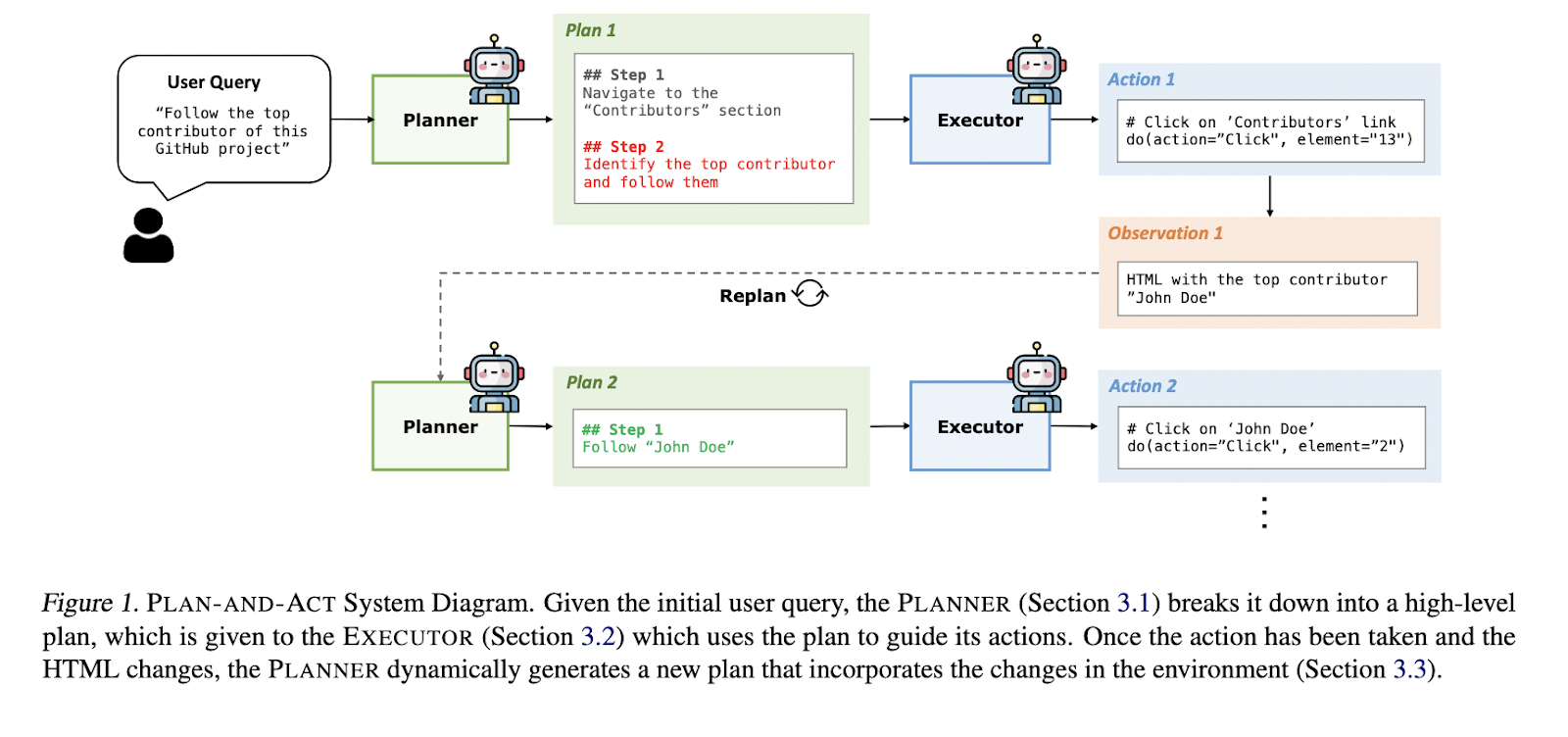

This AI Paper Introduces PLAN-AND-ACT: A Modular Framework for Long-Horizon Planning in Web-Based Language Agents

Large language models are powering a new wave of digital agents that handle sophisticated web-based tasks. These agents are expected to interpret user instructions, navigate interfaces, and execute complex commands in ever-changing environments. The difficulty lies not in understanding language…

-

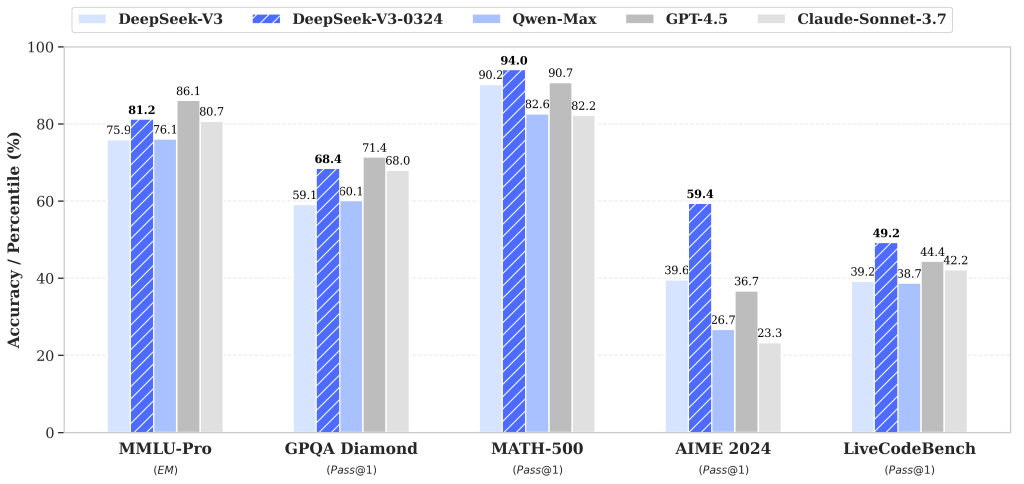

DeepSeek AI Unveils DeepSeek-V3-0324: Blazing Fast Performance on Mac Studio, Heating Up the Competition with OpenAI

Artificial intelligence (AI) has made significant strides in recent years, yet challenges persist in achieving efficient, cost-effective, and high-performance models. Developing large language models (LLMs) often requires substantial computational resources and financial investment, which can be prohibitive for many…

-

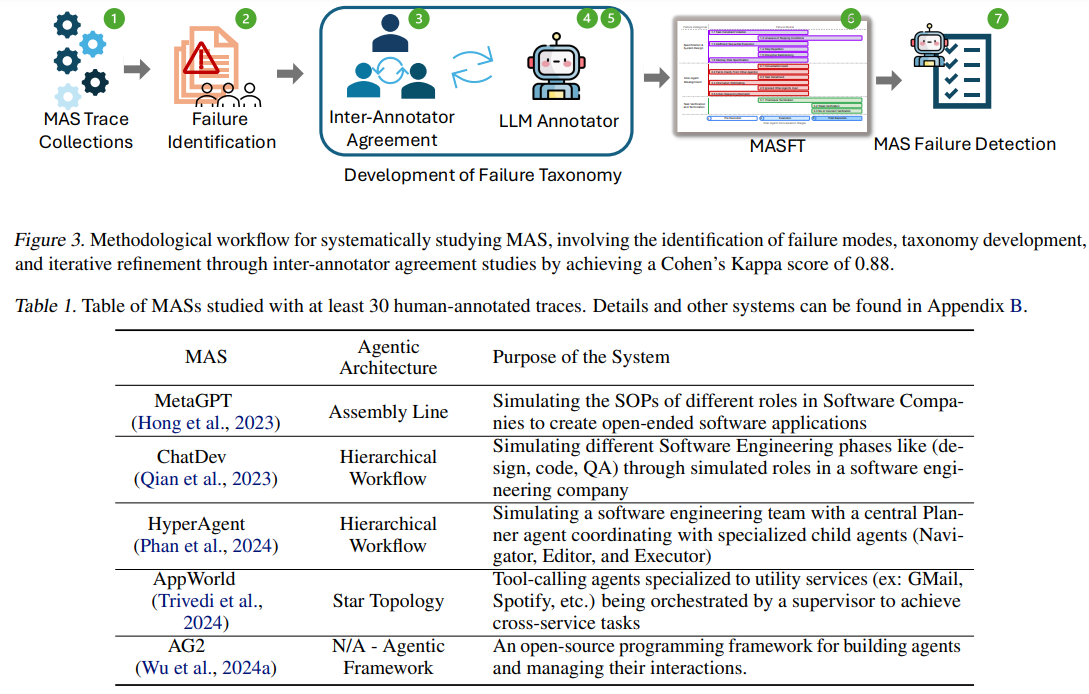

Understanding and Mitigating Failure Modes in LLM-Based Multi-Agent Systems

Despite the growing interest in Multi-Agent Systems (MAS), where multiple LLM-based agents collaborate on complex tasks, their performance gains remain limited compared to single-agent frameworks. While MASs are explored in software engineering, drug discovery, and scientific simulations, they often struggle with coordination inefficiencies, leading to high…

-

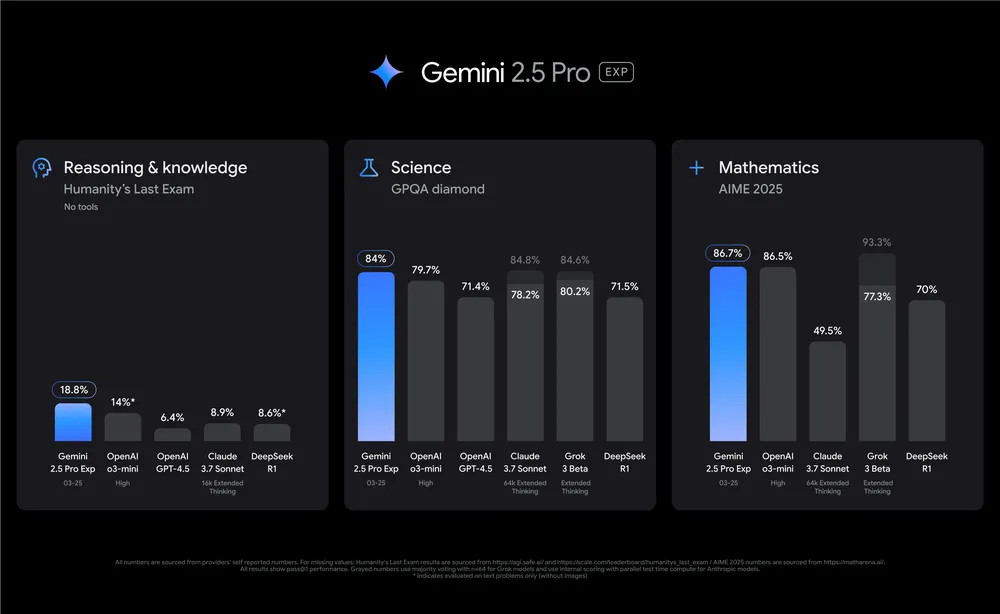

Google AI Released Gemini 2.5 Pro Experimental: An Advanced AI Model that Excels in Reasoning, Coding, and Multimodal Capabilities

In the evolving field of artificial intelligence, a significant challenge has been developing models that can effectively reason through complex problems, generate accurate code, and process multiple forms of data. Traditional AI systems often excel in…