AI News

-

This AI Paper Unveils a Reverse-Engineered Simulator Model for Modern NVIDIA GPUs: Enhancing Microarchitecture Accuracy and Performance Prediction

GPUs are widely recognized for their efficiency in handling high-performance computing workloads, such as those found in artificial intelligence and scientific simulations. These processors are designed to execute thousands of threads simultaneously, with hardware support for features…

-

Snowflake Proposes ExCoT: A Novel AI Framework that Iteratively Optimizes Open-Source LLMs by Combining CoT Reasoning with off-Policy and on-Policy DPO, Relying Solely on Execution Accuracy as Feedback

Text-to-SQL translation, the task of transforming natural language queries into structured SQL statements, is essential for facilitating user-friendly database interactions. However, the task involves significant complexities, notably…

-

Advancing Vision-Language Reward Models: Challenges, Benchmarks, and the Role of Process-Supervised Learning

Process-supervised reward models (PRMs) offer fine-grained, step-wise feedback on model responses, aiding in selecting effective reasoning paths for complex tasks. Unlike output reward models (ORMs), which evaluate responses based on final outputs, PRMs provide detailed assessments at each step, making them particularly valuable…

-

Salesforce AI Introduce BingoGuard: An LLM-based Moderation System Designed to Predict both Binary Safety Labels and Severity Levels

The advancement of large language models (LLMs) has significantly influenced interactive technologies, presenting both benefits and challenges. One prominent issue arising from these models is their potential to generate harmful content. Traditional moderation systems, typically employing binary…

-

Enhancing Strategic Decision-Making in Gomoku Using Large Language Models and Reinforcement Learning

LLMs have significantly advanced NLP, demonstrating strong text generation, comprehension, and reasoning capabilities. These models have been successfully applied across various domains, including education, intelligent decision-making, and gaming. LLMs serve as interactive tutors in education, aiding personalized learning and improving students’ reading and…

-

OpenAI Releases PaperBench: A Challenging Benchmark for Assessing AI Agents’ Abilities to Replicate Cutting-Edge Machine Learning Research

The rapid progress in artificial intelligence (AI) and machine learning (ML) research underscores the importance of accurately evaluating AI agents’ capabilities in replicating complex, empirical research tasks traditionally performed by human researchers. Currently, systematic evaluation tools that…

-

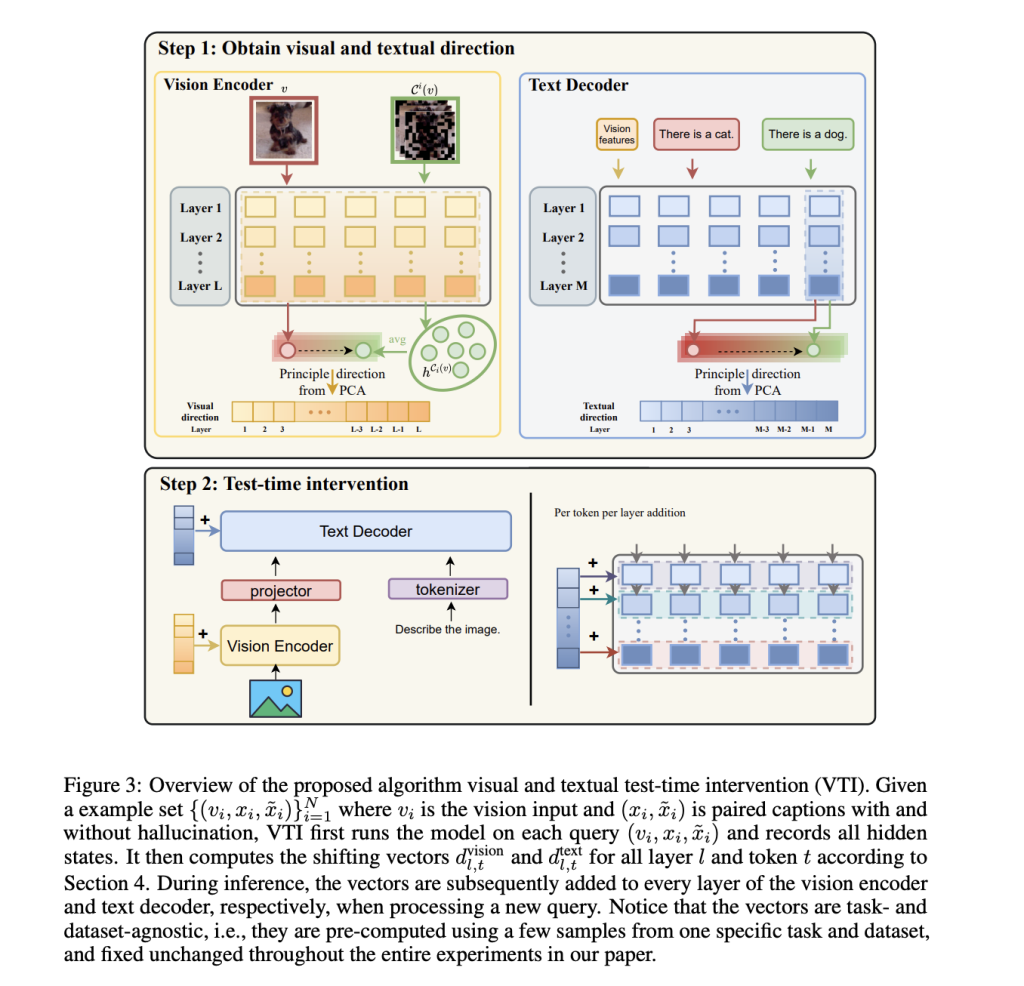

Mitigating Hallucinations in Large Vision-Language Models: A Latent Space Steering Approach

Hallucination remains a significant challenge in deploying Large Vision-Language Models (LVLMs), as these models often generate text misaligned with visual inputs. Unlike hallucination in LLMs, which arises from linguistic inconsistencies, LVLMs struggle with cross-modal discrepancies, leading to inaccurate image descriptions or incorrect spatial relationships.…

-

Nomic Open Sources State-of-the-Art Multimodal Embedding Model

Nomic has announced the release of a groundbreaking embedding model that achieves state-of-the-art performance on visual document retrieval tasks. The new model seamlessly processes interleaved text, images, and screenshots, establishing a new high score on the Vidore-v2 benchmark for visual document retrieval. This advancement is particularly significant…

-

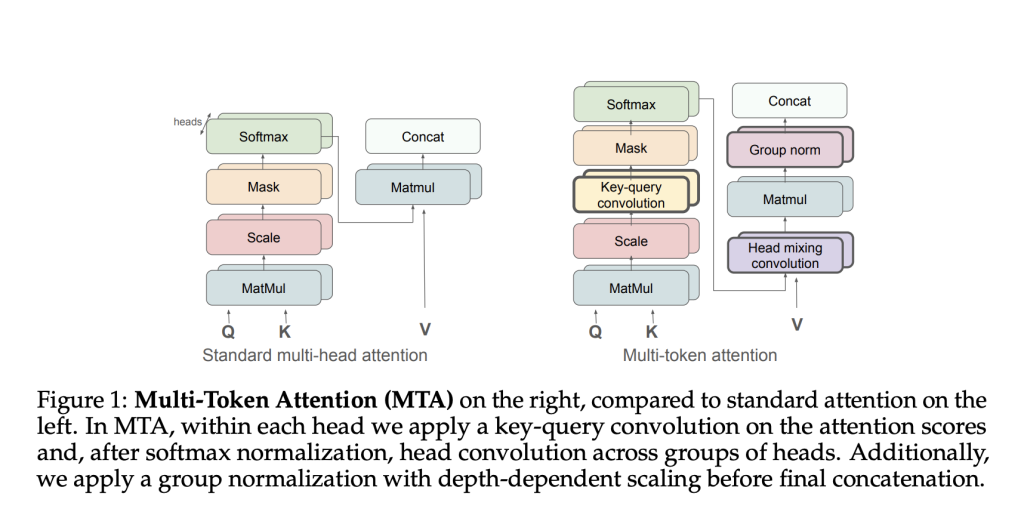

Meta AI Proposes Multi-Token Attention (MTA): A New Attention Method which Allows LLMs to Condition their Attention Weights on Multiple Query and Key Vectors

Large Language Models (LLMs) significantly benefit from attention mechanisms, enabling the effective retrieval of contextual information. Nevertheless, traditional attention methods primarily depend on single token attention, where each attention weight is…

-

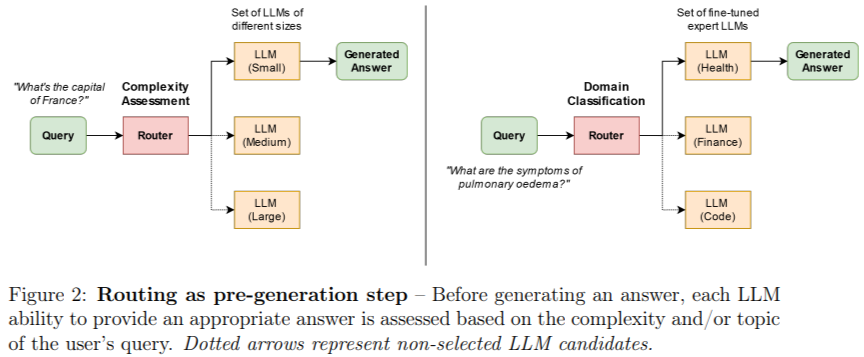

A Comprehensive Guide to LLM Routing: Tools and Frameworks

Deploying LLMs presents challenges, particularly in optimizing efficiency, managing computational costs, and ensuring high-quality performance. LLM routing has emerged as a strategic solution to these challenges, enabling intelligent task allocation to the most suitable models or tools. Let’s delve into the intricacies of LLM routing, explore…