Self-correction mechanisms have been a significant topic of interest within artificial intelligence, particularly in Large Language Models (LLMs). Self-correction is traditionally seen as a distinctive human trait. Still, researchers have started investigating how it can be applied to LLMs to enhance their capabilities without requiring external inputs. This emerging area explores ways to enable LLMs to evaluate and refine their responses, making them more autonomous and effective in understanding complex tasks and generating contextually appropriate answers.

Researchers aim to address a critical problem: LLMs’ dependence on external critics and predefined supervision to improve response quality. Conventional models, while powerful, often rely on human feedback or external evaluators to correct errors in generated content. This dependency limits their ability to self-improve and function independently. A comprehensive understanding of how LLMs can autonomously correct their mistakes is essential for building more advanced systems that can operate without constant external validation. Achieving this understanding can revolutionize how AI models learn and evolve.

Most existing methods in this field include Reinforcement Learning from Human Feedback (RLHF) or Direct Preference Optimization (DPO). These methods typically incorporate external critics or human preference data to guide LLMs in refining their responses. For instance, in RLHF, a model receives feedback from humans on its generated responses and uses that feedback to adjust its subsequent outputs. Although these methods have succeeded, they do not enable models to improve their behaviors autonomously. This constraint presents a challenge in developing LLMs that can independently identify and correct their mistakes, thereby requiring novel approaches to enhance self-correction abilities.

Researchers from MIT CSAIL, Peking University, and TU Munich have introduced an innovative theoretical framework based on in-context alignment (ICA). The research proposes a structured process where LLMs use internal mechanisms to self-criticize and refine responses. By adopting a generation-critic-regeneration methodology, the model starts with an initial response, critiques its performance internally using a reward metric, and then generates an improved response. The process repeats until the output meets a higher alignment standard. This method transforms the traditional (query, response) context into a more complex triplet format (query, response, reward). The study argues that such a formulation helps models evaluate and align themselves more effectively without requiring predefined human-guided targets.

The researchers utilized a multi-layer transformer architecture to implement the proposed self-correction mechanism. Each layer consists of multi-head self-attention and feed-forward network modules that enable the model to discern between good and bad responses. Specifically, the architecture was designed to allow LLMs to perform gradient descent through in-context learning, enabling a more nuanced and dynamic understanding of alignment tasks. Through synthetic data experiments, the researchers validated that transformers could indeed learn from noisy outputs when guided by accurate critics. The study’s theoretical contributions also shed light on how specific architectural components like softmax attention and feed-forward networks are crucial for enabling effective in-context alignment, setting a new standard for transformer-based architectures.

Performance evaluation revealed substantial improvements across multiple test scenarios. The self-correction mechanism significantly reduced error rates and enhanced alignment in LLMs, even in situations involving noisy feedback. For instance, the proposed method exhibited a drastic reduction in attack success rates during jailbreak tests, with the success rate dropping from 95% to as low as 1% in certain scenarios using LLMs such as Vicuna-7b and Llama2-7b-chat. The results indicated that self-correcting mechanisms could defend against sophisticated jailbreak attacks like GCG-individual, GCG-transfer, and AutoDAN. This robust performance suggests that self-correcting LLMs have the potential to offer improved safety and robustness in real-world applications.

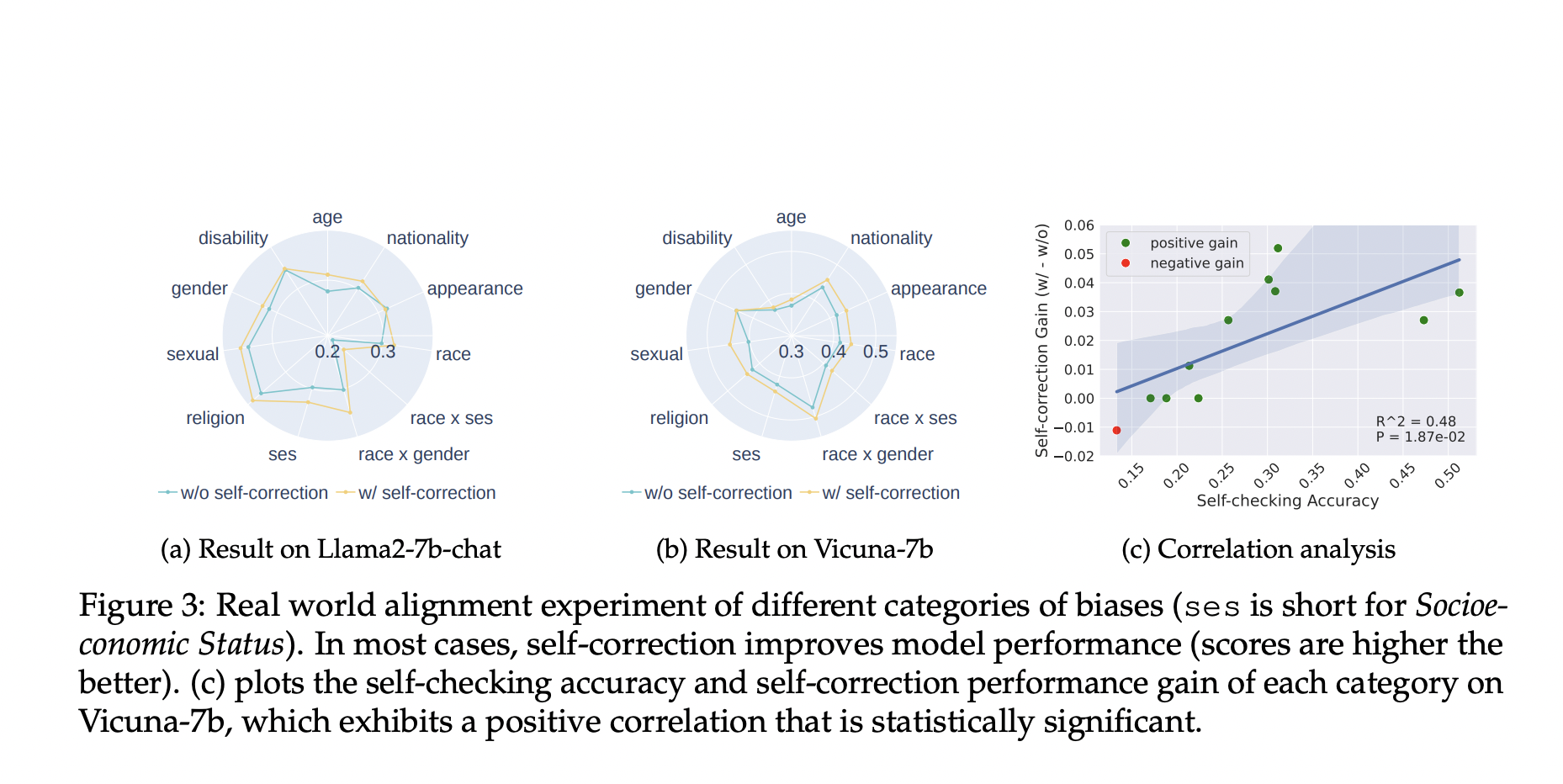

The proposed self-correction method also improved significantly in experiments addressing social biases. When applied to the Bias Benchmark for QA (BBQ) dataset, which evaluates biases across nine social dimensions, the method achieved performance gains in categories such as gender, race, and socioeconomic status. The study demonstrated a 0% attack success rate across several bias dimensions using Llama2-7b-chat, proving the model’s effectiveness in maintaining alignment even in complex social contexts

In conclusion, this research offers a groundbreaking approach to self-correction in LLMs, emphasizing the potential for models to autonomously refine their outputs without relying on external feedback. The innovative use of in-context alignment and multi-layer transformer architectures demonstrates a clear path forward for developing more autonomous and intelligent language models. By enabling LLMs to self-evaluate and improve, the study paves the way for creating more robust, safe, and contextually aware AI systems capable of addressing complex tasks with minimal human intervention. This advancement could significantly enhance the future design and application of LLMs across various domains, setting a foundation for models that not only learn but also evolve independently.

Check out the Paper. All credit for this research goes to the researchers of this project. Also, don’t forget to follow us on Twitter and join our Telegram Channel and LinkedIn Group. If you like our work, you will love our newsletter..

Don’t Forget to join our 50k+ ML SubReddit.

We are inviting startups, companies, and research institutions who are working on small language models to participate in this upcoming ‘Small Language Models’ Magazine/Report by Marketchpost.com. This Magazine/Report will be released in late October/early November 2024. Click here to set up a call!

The post Researchers from MIT and Peking University Introduce a Self-Correction Mechanism for Improving the Safety and Reliability of Large Language Models appeared first on MarkTechPost.