A significant challenge in AI-driven game simulation is accurately simulating complex, real-time interactive environments with neural models. Traditional game engines rely on manually crafted loops that gather user inputs, update the game state, and render visuals at high frame rates, which is crucial for maintaining the illusion of an interactive virtual world. Replicating this process with neural models is difficult for three reasons: visual fidelity must be maintained, the simulation must stay stable over extended sequences, and the model must run fast enough for real-time play. Addressing these challenges is essential for advancing AI in game development and could pave the way for a new paradigm in which game engines are powered by neural networks rather than manually written code.
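For reference, the hand-written loop that traditional engines rely on can be sketched in a few lines. This is a toy illustration, not code from any real engine; `GameState`, `update`, `render`, and `run_loop` are hypothetical names, and the "frame" is just a string.

```python
from dataclasses import dataclass


@dataclass
class GameState:
    """Toy state: player position on a 1-D line (hypothetical)."""
    x: int = 0


def update(state: GameState, action: str) -> GameState:
    """Apply one input to the state using hand-written rules."""
    step = {"left": -1, "right": 1}.get(action, 0)
    return GameState(x=state.x + step)


def render(state: GameState, width: int = 7) -> str:
    """Draw the state as a text 'frame'; the player is shown as '@'."""
    pos = max(0, min(width - 1, state.x + width // 2))
    return "." * pos + "@" + "." * (width - pos - 1)


def run_loop(actions):
    """Classic loop: gather input, update state, render a frame."""
    state = GameState()
    frames = []
    for action in actions:
        state = update(state, action)
        frames.append(render(state))
    return frames


frames = run_loop(["right", "right", "left"])
```

A neural game engine would have to learn the combined effect of `update` and `render` from data instead of having them written by hand.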

Current approaches to simulating interactive environments with neural models draw on techniques such as Reinforcement Learning (RL) and diffusion models. Earlier systems such as World Models by Ha and Schmidhuber (2018) and GameGAN by Kim et al. (2020) were developed to simulate game environments using neural networks. However, these methods face significant limitations, including high computational costs, instability over long trajectories, and poor visual quality. For instance, GameGAN, while effective for simpler games, struggles with complex environments like DOOM, often producing blurry, low-quality images. These limitations make such methods poorly suited to real-time applications and restrict their utility in more demanding game simulations.

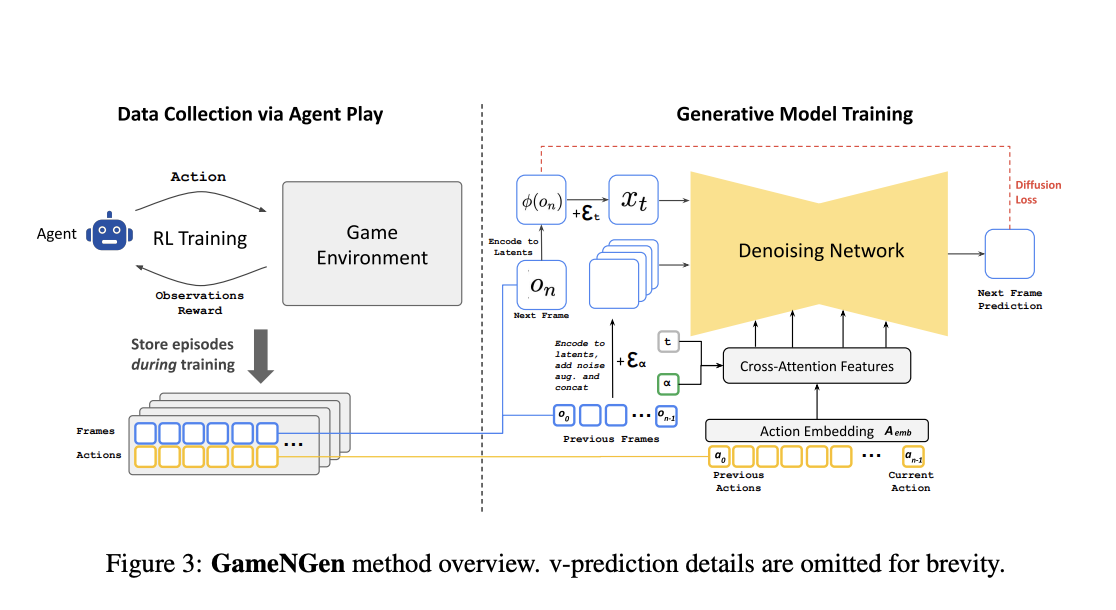

Researchers from Google and Tel Aviv University introduce GameNGen, a novel approach that uses an augmented version of the Stable Diffusion v1.4 model to simulate complex interactive environments, such as the game DOOM, in real time. GameNGen addresses the limitations of existing methods with a two-phase training process: first, an RL agent is trained to play the game, generating a dataset of gameplay trajectories; second, a generative diffusion model is trained on these trajectories to predict the next game frame from past actions and observations. Using a diffusion model as the simulator enables high-quality, stable, real-time interactive experiences. GameNGen represents a significant advance in AI-driven game engines, demonstrating that a neural model can come close to the visual quality of the original game while running interactively.
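At play time, next-frame prediction implies an autoregressive loop: each generated frame is fed back as context for the next prediction. The sketch below illustrates only that control flow. `predict_next_frame` is a hypothetical stand-in for the diffusion model (frames are plain integers so the example runs), and `context_len` is an assumed parameter, not a value taken from the paper.

```python
from collections import deque


def predict_next_frame(past_frames, past_actions):
    """Hypothetical stand-in for the diffusion model.

    Frames are plain integers here; the 'prediction' is just the last
    frame plus the last action so the example stays runnable.
    """
    return past_frames[-1] + past_actions[-1]


def rollout(first_frame, actions, context_len=4):
    """Generate frames autoregressively from a sliding context window."""
    frames = deque([first_frame], maxlen=context_len)
    taken = deque(maxlen=context_len)
    generated = []
    for action in actions:
        taken.append(action)
        nxt = predict_next_frame(list(frames), list(taken))
        frames.append(nxt)  # the model's own output becomes future context
        generated.append(nxt)
    return generated


generated = rollout(first_frame=0, actions=[1, 1, -1, 2])
```

Because the model consumes its own outputs, any error in one frame can propagate into later ones, which is why stability over long sequences is a central concern.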

GameNGen’s development involves a two-stage training process. Initially, an RL agent is trained to play DOOM, creating a diverse set of gameplay trajectories. These trajectories are then used to train a generative diffusion model, a modified version of Stable Diffusion v1.4, to predict subsequent game frames from sequences of past actions and observations. The diffusion loss is minimized using velocity parameterization. To counter autoregressive drift, in which frame quality degrades over time as the model is conditioned on its own outputs, noise augmentation is introduced during training. Additionally, the researchers fine-tuned the latent decoder to improve image quality, particularly for the in-game HUD (heads-up display). The model was trained in a VizDoom environment on a dataset of 900 million frames, using a batch size of 128 and a learning rate of 2e-5.
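Two of the training ingredients mentioned here can be illustrated on toy data. The sketch below computes the standard velocity-parameterization target, v = sqrt(ᾱ)·ε − sqrt(1 − ᾱ)·x0, and applies noise augmentation by corrupting context frames with Gaussian noise of a randomly sampled level. It is a minimal sketch under stated assumptions, not the authors' code: frames are short lists of floats, the function names are hypothetical, and `alpha_bar = 0.64` and `max_level = 0.7` are illustrative values chosen for the example.

```python
import math
import random


def velocity_target(x0, eps, alpha_bar):
    """v = sqrt(alpha_bar) * eps - sqrt(1 - alpha_bar) * x0, elementwise."""
    a, s = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [a * e - s * x for x, e in zip(x0, eps)]


def noisy_sample(x0, eps, alpha_bar):
    """x_t = sqrt(alpha_bar) * x0 + sqrt(1 - alpha_bar) * eps."""
    a, s = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [a * x + s * e for x, e in zip(x0, eps)]


def augment_context(context, rng, max_level=0.7):
    """Corrupt context frames with Gaussian noise of a random level.

    Returns the noisy frames and the sampled level, so the level can be
    passed to the model as an extra conditioning input (an assumed design).
    """
    level = rng.uniform(0.0, max_level)
    noisy = [[x + level * rng.gauss(0.0, 1.0) for x in frame] for frame in context]
    return noisy, level


rng = random.Random(0)
x0 = [0.5, -0.25, 1.0]  # toy "latent" of the target frame
eps = [rng.gauss(0.0, 1.0) for _ in x0]
alpha_bar = 0.64  # illustrative noise-schedule value
x_t = noisy_sample(x0, eps, alpha_bar)
v = velocity_target(x0, eps, alpha_bar)
context, level = augment_context([[0.0, 0.0, 0.0], [1.0, 1.0, 1.0]], rng)
```

Training on corrupted context exposes the model to imperfect inputs resembling its own slightly wrong generations, which is the intuition behind using noise augmentation against drift.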

GameNGen demonstrates impressive simulation quality, producing visuals nearly indistinguishable from the original DOOM game, even over extended sequences. The model achieves a Peak Signal-to-Noise Ratio (PSNR) of 29.43 dB, comparable to lossy JPEG compression, and a Learned Perceptual Image Patch Similarity (LPIPS) score of 0.249 (lower is better), indicating strong visual fidelity. Output quality holds up when simulating long trajectories, with only minimal degradation over time. Moreover, the approach is robust in maintaining game logic and visual consistency, simulating complex game scenarios in real time at 20 frames per second. These results underline the model’s ability to deliver high-quality, stable performance in real-time game simulation, a significant step forward in the use of AI for interactive environments.
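For readers unfamiliar with the metric, PSNR is computed from the mean squared error between a reference image and a prediction: PSNR = 10·log10(MAX² / MSE), in decibels. The sketch below implements this definition in plain Python on flattened pixel lists. The function name and the toy pixel values are illustrative, and this is not the evaluation code used in the paper.

```python
import math


def psnr(reference, prediction, max_value=255.0):
    """Peak Signal-to-Noise Ratio in dB between two equal-sized images."""
    if len(reference) != len(prediction):
        raise ValueError("images must have the same number of pixels")
    mse = sum((r - p) ** 2 for r, p in zip(reference, prediction)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


reference = [0, 64, 128, 255]
prediction = [2, 62, 130, 253]  # every pixel is off by 2
score = psnr(reference, prediction)
```

Higher values mean the prediction is closer to the reference. PSNR is a pixel-level measure, whereas LPIPS compares deep-network features, which is why the two are often reported together.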

GameNGen presents a breakthrough in AI-driven game simulation by demonstrating that complex interactive environments like DOOM can be simulated by a neural model in real time while maintaining high visual quality. The proposed method addresses critical challenges in the field by combining RL and diffusion models to overcome the limitations of previous approaches. With its ability to run at 20 frames per second on a single TPU while delivering visuals comparable to the original game, GameNGen signals a potential shift towards a new era in game development, where games are created and driven by neural models rather than traditional code-based engines. This innovation could make game development more accessible and cost-effective.

Check out the Paper and Project. All credit for this research goes to the researchers of this project.

The post What If Game Engines Could Run on Neural Networks? This AI Paper from Google Unveils GameNGen and Explores How Diffusion Models Are Revolutionizing Real-Time Gaming appeared first on MarkTechPost.