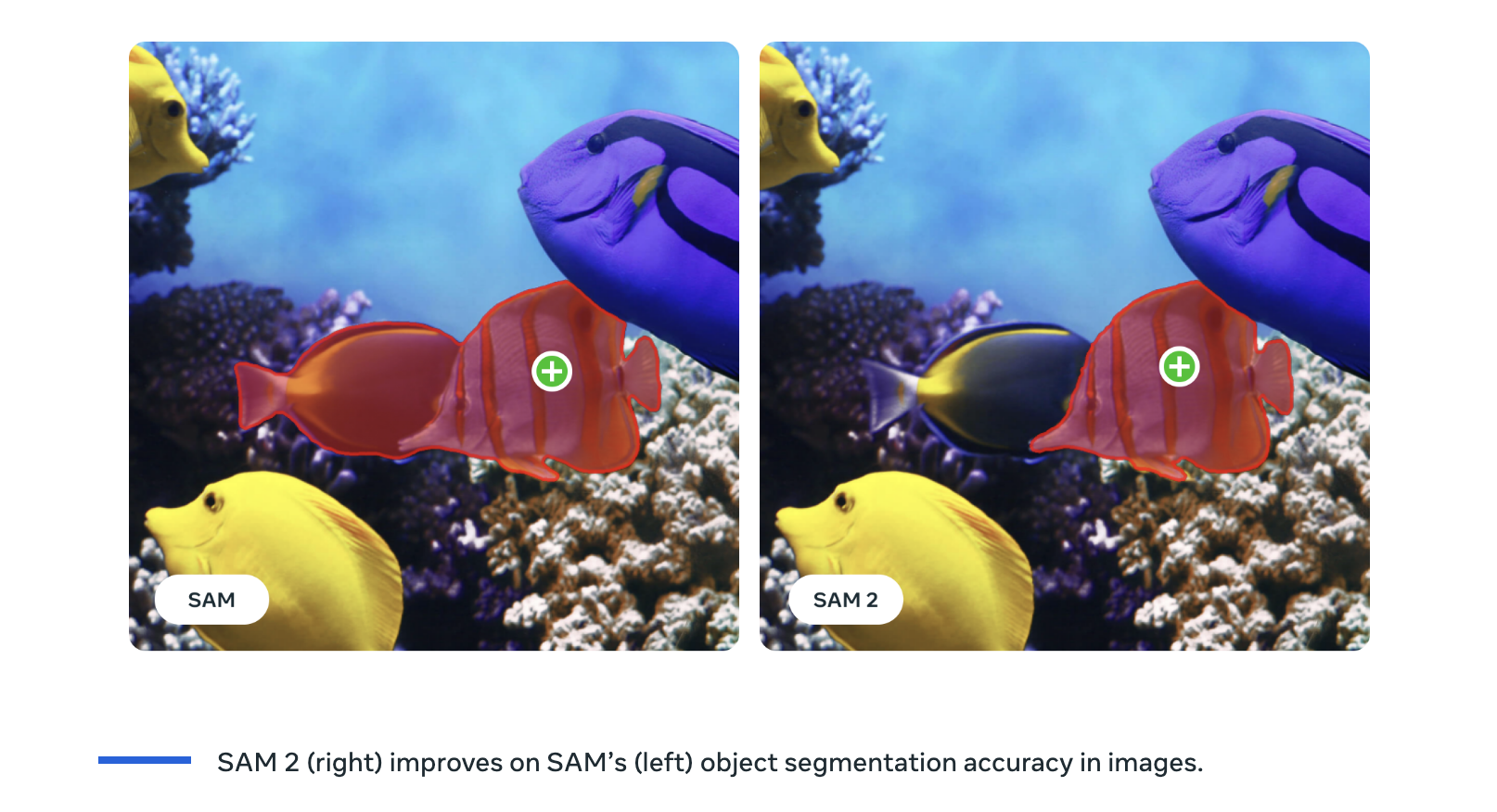

Meta has introduced SAM 2, the next generation of its Segment Anything Model. Building on its predecessor, SAM 2 is a unified model for real-time, promptable object segmentation in both images and videos. Where the original SAM focused primarily on images, SAM 2 extends those capabilities to video, segmenting and tracking objects across frames in real time. It does this without custom adaptation, because the model generalizes to new and unseen visual domains. This zero-shot generalization lets it segment objects in images and videos it was not trained on, which makes it adaptable to a wide range of use cases.

One of SAM 2's most notable features is its efficiency. It requires roughly three times less interaction time than previous models while achieving better image and video segmentation accuracy. This matters in practical applications where both time and precision are at a premium.

The potential applications of SAM 2 are vast and varied. For instance, in the creative industry, the model can generate new video effects, enhancing the capabilities of generative video models and unlocking new avenues for content creation. In data annotation, SAM 2 can expedite the labeling of visual data, thereby improving the training of future computer vision systems. This is particularly beneficial for industries relying on large datasets for training, such as autonomous vehicles and robotics.

SAM 2 also holds promise in the scientific and medical fields. It can segment moving cells in microscopy videos, aiding research and diagnostic processes. Its ability to track objects in drone footage can likewise assist in monitoring wildlife and conducting environmental studies.

In line with Meta’s commitment to open science, the SAM 2 project releases the model’s code and weights under an Apache 2.0 license. This openness encourages collaboration and innovation within the AI community, allowing researchers and developers to explore new capabilities and applications of the model. Meta has also released the SA-V dataset, a collection of approximately 51,000 real-world videos and over 600,000 spatio-temporal masks, under a CC BY 4.0 license. This dataset is significantly larger than previous datasets, providing a rich resource for training and testing segmentation models.

The development of SAM 2 involved significant technical innovations. The model’s architecture builds on the foundation laid by SAM, extending its capabilities to handle video data. This involves a memory mechanism that enables the model to recall previously processed information and accurately segment objects across video frames. The memory encoder, memory bank, and memory attention module are critical components that allow SAM 2 to manage the complexities of video segmentation, such as object motion, deformation, and occlusion.
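To make the memory idea concrete, here is a minimal sketch in plain Python. It is a toy illustration, not SAM 2's implementation: the `MemoryBank` class, the `attend` function, the first-in-first-out eviction policy, and the two-element feature vectors are all assumptions made for this example. It shows only the general pattern of storing features from earlier frames and blending them, weighted by similarity to the current frame's features.

```python
import math
from collections import deque


class MemoryBank:
    """Toy FIFO store of per-frame feature vectors (hypothetical, not SAM 2's API)."""

    def __init__(self, max_frames):
        # A deque with maxlen silently drops the oldest entry once it is full.
        self.frames = deque(maxlen=max_frames)

    def add(self, feature):
        self.frames.append(list(feature))

    def __len__(self):
        return len(self.frames)


def attend(query, bank):
    """Blend stored features using softmax weights from scaled dot products with the query."""
    if len(bank) == 0:
        return list(query)  # nothing remembered yet: pass the query through unchanged
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, mem)) / scale for mem in bank.frames]
    peak = max(scores)  # subtract the max before exponentiating for numerical stability
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    weights = [w / total for w in weights]
    return [
        sum(w * mem[i] for w, mem in zip(weights, bank.frames))
        for i in range(len(query))
    ]


bank = MemoryBank(max_frames=2)
bank.add([1.0, 0.0])
bank.add([0.0, 1.0])
bank.add([1.0, 1.0])  # the bank is full, so the oldest entry [1.0, 0.0] is evicted
context = attend([0.0, 2.0], bank)
```

In this sketch the bounded bank keeps the cost of each lookup constant no matter how long the video is, which is one plausible reason to cap memory for real-time use.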

The SAM 2 team developed a promptable visual segmentation task to address the challenges posed by video data. In this task, the model takes input prompts on any video frame and predicts a segmentation mask, which is then propagated across all frames to create a spatio-temporal mask. Further prompts on any frame can refine the prediction, and this iterative process yields precise segmentation results.
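The prompt-then-propagate flow can be sketched with a toy example in plain Python. This is not SAM 2's algorithm: a flood fill stands in for the model's mask prediction, frames are small grids of integer labels, and the `flood_fill` and `propagate` helpers are hypothetical names invented for illustration. The sketch also assumes the object overlaps its previous position between neighboring frames.

```python
def flood_fill(frame, seed):
    """Return the set of (row, col) cells connected to seed that share its value."""
    rows, cols = len(frame), len(frame[0])
    target = frame[seed[0]][seed[1]]
    mask, stack = set(), [seed]
    while stack:
        r, c = stack.pop()
        if (r, c) in mask or not (0 <= r < rows and 0 <= c < cols):
            continue
        if frame[r][c] != target:
            continue
        mask.add((r, c))
        stack.extend([(r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)])
    return mask


def propagate(video, prompt_frame, click):
    """Segment the clicked object in prompt_frame, then carry the mask to every other frame."""
    label = video[prompt_frame][click[0]][click[1]]
    masks = {prompt_frame: flood_fill(video[prompt_frame], click)}
    forward = range(prompt_frame + 1, len(video))
    backward = range(prompt_frame - 1, -1, -1)
    for order in (forward, backward):
        previous = masks[prompt_frame]
        for t in order:
            # Assumption: cells of the previous mask that still carry the object's
            # label in frame t are valid starting points for the new mask.
            seeds = [cell for cell in previous if video[t][cell[0]][cell[1]] == label]
            mask = set()
            for cell in seeds:
                if cell not in mask:
                    mask |= flood_fill(video[t], cell)
            masks[t] = mask
            previous = mask or previous  # keep the last known mask if the object vanished
    return [masks[t] for t in range(len(video))]


# A 3-frame toy video: the object (label 1) shifts one column to the right per frame.
video = [
    [[0, 0, 0, 0], [1, 1, 0, 0], [0, 0, 0, 0]],
    [[0, 0, 0, 0], [0, 1, 1, 0], [0, 0, 0, 0]],
    [[0, 0, 0, 0], [0, 0, 1, 1], [0, 0, 0, 0]],
]
masklet = propagate(video, prompt_frame=1, click=(1, 1))
```

The prompt here lands on the middle frame, so the toy propagates both forward and backward, mirroring the point that a prompt may be given on any frame rather than only the first.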

In conclusion, SAM 2 offers real-time, promptable object segmentation across images and videos in a single model. Its versatility, efficiency, and open-source nature make it a valuable tool for many applications, from creative industries to scientific research. By sharing SAM 2 with the global AI community, Meta fosters innovation and collaboration, paving the way for future breakthroughs in computer vision technology.

"Up until today, annotating masklets in videos has been clunky; combining the first SAM model with other video object segmentation models. With SAM 2 annotating masklets will reach a whole new level. I consider the reported 8x speedup to be the lower bound of what is achievable with the right UX, and with +1M inferences with SAM on the Encord platform, we’ve seen the tremendous value that these types of models can provide to ML teams." - Dr. Frederik Hvilshøj, Head of ML at Encord

The post Meta AI Introduces Meta Segment Anything Model 2 (SAM 2): The First Unified Model for Segmenting Objects Across Images and Videos appeared first on MarkTechPost.