Large language models (LLMs) have gained significant attention for their ability to store vast amounts of factual knowledge within their weights during pretraining. This capability has led to promising results in knowledge-intensive tasks, particularly factual question-answering. However, a critical challenge persists: LLMs often generate plausible but incorrect responses to queries, undermining their reliability. This inconsistency in factual accuracy poses a significant hurdle in the widespread adoption and trust of LLMs for knowledge-based applications. Researchers are grappling with the challenge of improving the factuality of LLM outputs while maintaining their versatility and generative capabilities. The problem is further complicated by the observation that even when LLMs possess the correct information, they may still produce inaccurate answers, suggesting underlying issues in knowledge retrieval and application.

Researchers have attempted various approaches to improve factuality in LLMs. Some studies focus on the impact of unfamiliar examples during fine-tuning, revealing that these can potentially worsen factuality due to overfitting. Other approaches examine the reliability of factual knowledge, showing LLMs often underperform on obscure information. Techniques to enhance factuality include manipulating attention mechanisms, using unsupervised internal probes, and developing methods for LLMs to abstain from answering uncertain questions. Some researchers have introduced fine-tuning techniques to encourage LLMs to refuse questions outside their knowledge boundaries. Also, studies have investigated LLM mechanisms and training dynamics, examining how facts are stored and extracted, and analyzing pretraining dynamics of syntax acquisition and attention patterns. Despite these efforts, challenges in achieving consistent factual accuracy persist.

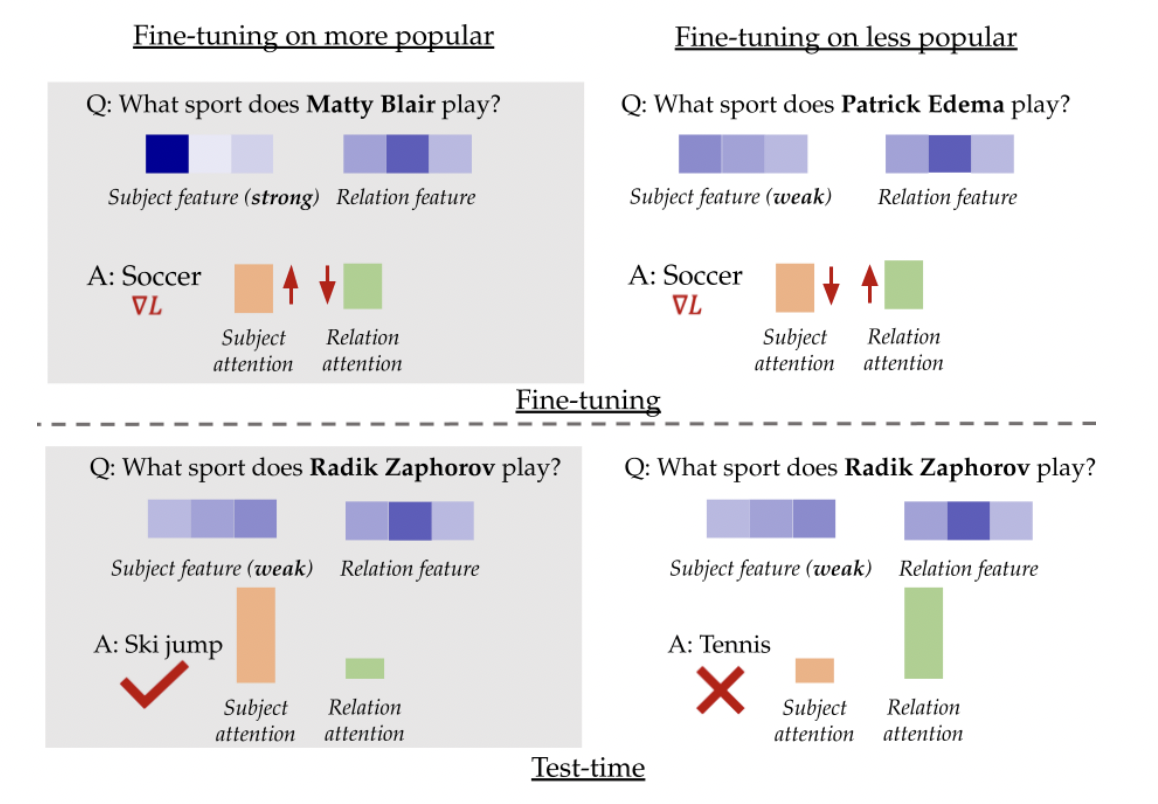

In this study, researchers from the Department of Machine Learning, at Carnegie Mellon University and the Department of Computer Science, at Stanford University found that the impact of fine-tuning examples on LLMs depends critically on how well the facts are encoded in the pre-trained model. Fine-tuning on well-encoded facts significantly improves factuality, while using less well-encoded facts can harm performance. This phenomenon occurs because LLMs can either use memorized knowledge or rely on general “shortcuts” to answer questions. The composition of fine-tuning data determines which mechanism is amplified. Well-known facts reinforce the use of memorized knowledge, while less familiar facts encourage shortcut usage. This insight provides a new perspective on improving LLM factuality through strategic selection of fine-tuning data.

The method utilizes a synthetic setup to study the impact of fine-tuning data on LLM factuality. This setup simulates a simplified token space for subjects, relations, and answers, with different formatting between pretraining and downstream tasks. Pretraining samples are drawn from a Zipf distribution for subjects and a uniform distribution for relations. Key findings reveal that fine-tuning popular facts significantly improves factuality, with effects amplified for less popular entities. The study examines the influence of the Zipf distribution parameter and pretraining steps on this phenomenon. These observations lead to the concept of “fact salience,” representing how well a model knows a fact, which influences fine-tuning behavior and downstream performance. This synthetic approach allows for a controlled investigation of pretraining processes that would be impractical with real large language models.

Experimental results across multiple datasets (PopQA, Entity-Questions, and MMLU) and models (Llama-7B and Mistral) consistently show that fine-tuning on less popular or less confident examples underperforms compared to using popular knowledge. This performance gap widens for less popular test points, supporting the hypothesis that less popular facts are more sensitive to fine-tuning choices. Surprisingly, even randomly selected subsets outperform fine-tuning on the least popular knowledge, suggesting that including some popular facts can mitigate the negative impact of less popular ones. Also, training on a smaller subset of the most popular facts often performs comparably or better than using the entire dataset. These findings indicate that careful selection of fine-tuning data, focusing on well-known facts, can lead to improved factual accuracy in LLMs, potentially allowing for more efficient and effective training processes.

The study provides significant insights into improving language model factuality through strategic QA dataset composition. Contrary to intuitive assumptions, finetuning on well-known facts consistently enhances overall factuality. This finding, observed across various settings and supported by a conceptual model, challenges conventional approaches to QA dataset design. The research opens new avenues for improving language model performance, suggesting potential benefits in regularization techniques to overcome attention imbalance, curriculum learning strategies, and the development of synthetic data for efficient knowledge extraction. These findings provide a foundation for future work aimed at enhancing the factual accuracy and reliability of language models in diverse applications.

Check out the Paper. All credit for this research goes to the researchers of this project. Also, don’t forget to follow us on Twitter.

Join our Telegram Channel and LinkedIn Group.

If you like our work, you will love our newsletter..

Don’t Forget to join our 46k+ ML SubReddit

The post Rethinking QA Dataset Design: How Popular Knowledge Enhances LLM Accuracy? appeared first on MarkTechPost.