Together AI has introduced Together MoA, a Mixture of Agents (MoA) approach that combines the strengths of multiple large language models (LLMs) to improve on state-of-the-art response quality. Rather than being a single new model, it orchestrates several existing LLMs, and it has set a new top score on the AlpacaEval 2.0 benchmark.

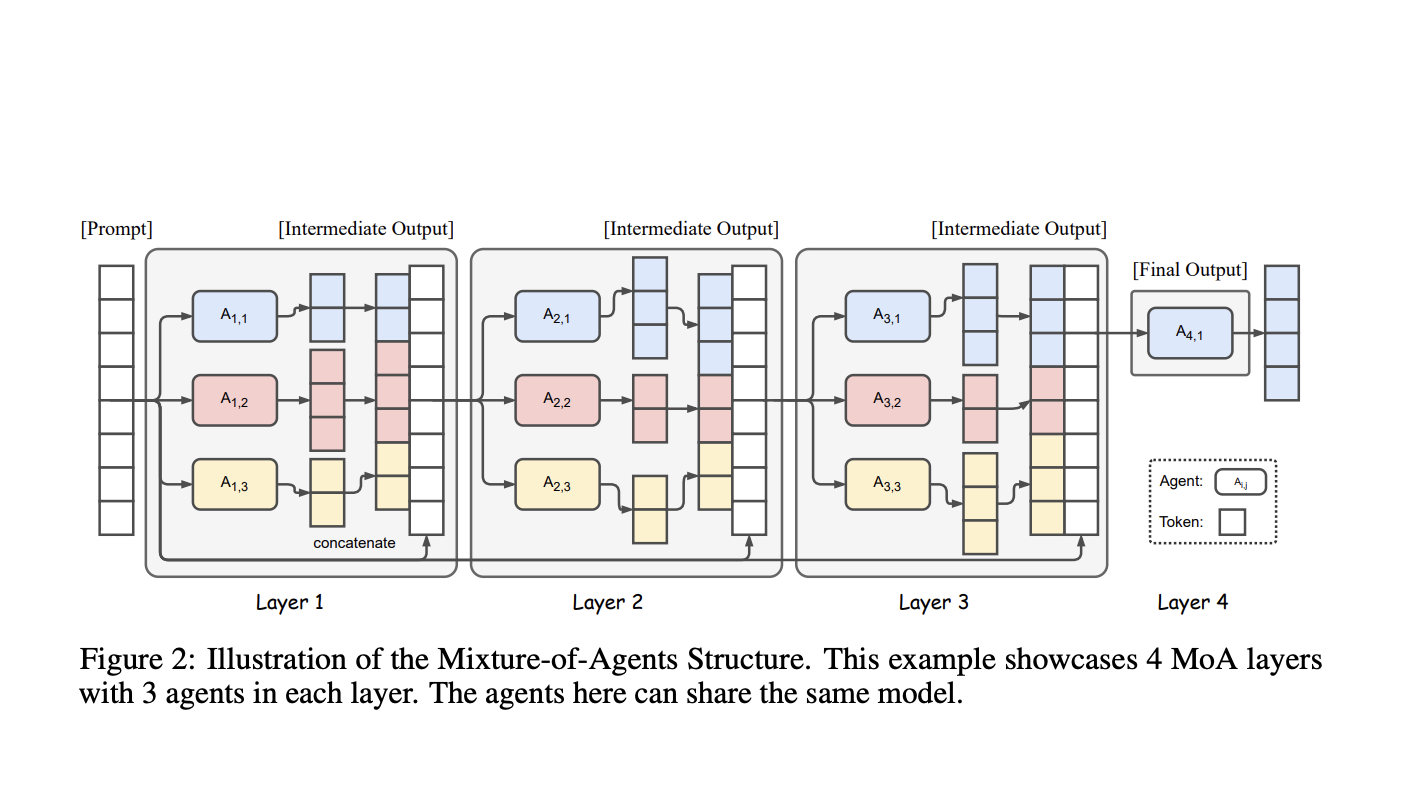

MoA employs a layered architecture, with each layer comprising several LLM agents. Each agent receives the outputs of the previous layer as auxiliary information and uses them to generate a refined response. This lets MoA integrate diverse capabilities and insights from various models, resulting in a more robust and versatile combined system. The implementation scored 65.1% on the AlpacaEval 2.0 benchmark, surpassing the previous leader, GPT-4o, which scored 57.5%.
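To make the idea of "auxiliary information" concrete, here is a minimal sketch of how one layer's outputs might be folded into the prompt for the next layer. The function name `build_layer_prompt` and the instruction wording are assumptions made for illustration; they are not the prompt Together AI uses.

```python
from typing import List


def build_layer_prompt(user_query: str, previous_outputs: List[str]) -> str:
    """Fold the previous layer's responses into the prompt as reference material.

    Hypothetical helper: the instruction text below is illustrative only.
    """
    # The first layer has no earlier responses, so it sees the bare query.
    if not previous_outputs:
        return user_query

    numbered = "\n".join(
        f"{i}. {text}" for i, text in enumerate(previous_outputs, start=1)
    )
    return (
        "Several models have already answered the query below. "
        "Treat their responses as reference material, evaluate them critically, "
        "and write one refined answer.\n\n"
        f"Responses:\n{numbered}\n\n"
        f"Query: {user_query}"
    )


prompt = build_layer_prompt(
    "What is a mixture of agents?",
    ["It combines several LLMs.", "It stacks LLM agents in layers."],
)
print(prompt)
```

Each agent in a later layer would receive a prompt like this one instead of the raw query.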

A critical insight driving the development of MoA is the concept of “collaborativeness” among LLMs. This phenomenon suggests that an LLM tends to generate better responses when presented with outputs from other models, even if those models are less capable. By leveraging this insight, MoA’s architecture categorizes models into “proposers” and “aggregators.” Proposers generate initial reference responses, offering nuanced and diverse perspectives, while aggregators synthesize these responses into high-quality outputs. This iterative process continues through several layers until a comprehensive and refined response is achieved.
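The proposer and aggregator roles can be sketched as a short loop. This is a simplified illustration under stated assumptions, not Together AI's implementation: models are represented as plain Python callables, `make_stub`, `with_references`, and `mixture_of_agents` are hypothetical names, and the stub models only report how many references they received so that the data flow is easy to follow.

```python
from typing import Callable, List

# Assumption: a "model" is any function mapping a prompt string to a response.
Model = Callable[[str], str]


def with_references(query: str, references: List[str]) -> str:
    """Attach earlier responses to the query; each is tagged with '[ref]'."""
    if not references:
        return query
    tagged = "\n".join(f"[ref] {r}" for r in references)
    return f"{tagged}\n\nQuery: {query}"


def mixture_of_agents(
    query: str, proposer_layers: List[List[Model]], aggregator: Model
) -> str:
    """Run the proposer layers in order, then synthesize with the aggregator."""
    references: List[str] = []
    for layer in proposer_layers:
        # Every proposer in this layer sees the previous layer's outputs.
        prompt = with_references(query, references)
        references = [model(prompt) for model in layer]
    # The aggregator turns the final set of references into one answer.
    return aggregator(with_references(query, references))


def make_stub(name: str) -> Model:
    """Stand-in for a real LLM call; reports how many references it saw."""
    def model(prompt: str) -> str:
        return f"{name} saw {prompt.count('[ref]')} references"
    return model


layers = [
    [make_stub("p1"), make_stub("p2"), make_stub("p3")],
    [make_stub("p1"), make_stub("p2"), make_stub("p3")],
]
answer = mixture_of_agents("Explain MoA.", layers, make_stub("agg"))
print(answer)
```

In a real deployment each stub would be replaced by a call to a hosted LLM, and the proposers within a layer could run concurrently since they do not depend on one another.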

The Together MoA framework has been tested on multiple benchmarks, including AlpacaEval 2.0, MT-Bench, and FLASK, and it achieved top positions on the AlpacaEval 2.0 and MT-Bench leaderboards. Notably, on AlpacaEval 2.0, Together MoA achieved an absolute improvement of 7.6 percentage points, from 57.5% (GPT-4o) to 65.1%, using only open-source models. On this benchmark, a combination of open-source models outperformed a leading closed-source alternative.
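The difference between an absolute and a relative improvement is easy to blur, so here is the arithmetic spelled out using the two AlpacaEval 2.0 scores reported above. The variable names are illustrative only.

```python
# AlpacaEval 2.0 scores quoted in the article, in percent.
gpt4o_score = 57.5
moa_score = 65.1

# Absolute gain is measured in percentage points.
absolute_gain = moa_score - gpt4o_score

# Relative gain expresses the same gap as a fraction of the baseline.
relative_gain = absolute_gain / gpt4o_score * 100

print(f"absolute: {absolute_gain:.1f} points, relative: {relative_gain:.1f}%")
```

The gap is 7.6 percentage points, which corresponds to a relative improvement of roughly 13% over the GPT-4o score.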

In addition to its technical success, Together MoA is designed with cost-effectiveness in mind. By analyzing the cost-performance trade-offs, the research indicates that the Together MoA configuration provides the best balance, offering high-quality results at a reasonable cost. This is particularly evident in the Together MoA-Lite configuration, which, despite having fewer layers, matches GPT-4o in cost while achieving superior quality.

MoA’s success is attributed to the collaborative efforts of several organizations in the open-source AI community, including Meta AI, Mistral AI, Microsoft, Alibaba Cloud, and Databricks. Their work on models such as Meta Llama 3, Mixtral, WizardLM, Qwen, and DBRX has been instrumental in this achievement. Additionally, the AlpacaEval, MT-Bench, and FLASK benchmarks, developed by Tatsu Labs, LMSYS, and KAIST AI, played a crucial role in evaluating MoA’s performance.

Looking ahead, Together AI plans to further optimize the MoA architecture by exploring various model choices, prompts, and configurations. One key area of focus will be reducing time-to-first-token latency, which the team describes as an exciting future direction for this research. The team also aims to enhance MoA’s capabilities in reasoning-focused tasks.

In conclusion, Together MoA represents a significant advancement in leveraging the collective intelligence of open-source models. Its layered approach and collaborative ethos exemplify the potential for enhancing AI systems, making them more capable, robust, and aligned with human reasoning. The AI community eagerly anticipates this groundbreaking technology’s continued evolution and application.

Check out the Paper, GitHub, and Blog. All credit for this research goes to the researchers of this project.

The post Together AI Introduces Mixture of Agents (MoA): An AI Framework that Leverages the Collective Strengths of Multiple LLMs to Improve State-of-the-Art Quality appeared first on MarkTechPost.