Software engineering has witnessed remarkable advancements with the development of Large Language Models (LLMs). These models, trained on extensive datasets, have demonstrated proficiency in various tasks, including code generation, translation, and optimization. LLMs are increasingly utilized for compiler optimization, a critical process that transforms source code to enhance performance and efficiency while maintaining functionality. However, traditional code optimization methods are often labor-intensive and require specialized knowledge of the target programming language and the underlying hardware architecture, posing significant challenges as software grows in complexity and scale.

A central challenge in software development is optimizing code efficiently across diverse hardware architectures. Traditional optimization methods compound the problem because they are time-consuming and demand deep expertise. As software systems expand, achieving optimal performance becomes harder still, which calls for tools and methodologies that can handle the intricacies of modern codebases.

Earlier approaches to code optimization have used machine learning algorithms to guide the process. These methods represent code in forms such as graphs or numeric features so that the algorithms can reason about it. However, these representations often lack critical details, leading to suboptimal performance. While LLMs like Code Llama and GPT-4 have been used for minor optimization tasks, they lack specialized training for comprehensive compiler optimization, which limits their effectiveness in this domain.
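To make the point about lossy representations concrete, here is a minimal sketch of one kind of numeric feature: a histogram of opcodes counted from LLVM-IR text. The two IR snippets, the function name `opcode_histogram`, and the regular expression are illustrative assumptions, not taken from the LLM Compiler paper or from any particular prior system.

```python
import re
from collections import Counter

# Two hypothetical LLVM-IR functions, written only for this illustration.
# They compute different results but use the same opcodes.
IR_SUM_TWICE = """
define i32 @f(i32 %a, i32 %b) {
entry:
  %sum = add i32 %a, %b
  %twice = add i32 %sum, %sum
  ret i32 %twice
}
"""

IR_DOUBLE_B = """
define i32 @g(i32 %a, i32 %b) {
entry:
  %unused = add i32 %a, %b
  %twice = add i32 %b, %b
  ret i32 %twice
}
"""

# Optional "%name = " prefix, then the lowercase opcode.
_OPCODE_RE = re.compile(r"^(?:%[\w.]+\s*=\s*)?([a-z]+)\b")


def opcode_histogram(ir_text):
    """Count opcodes in IR text; a deliberately crude feature extractor."""
    counts = Counter()
    for line in ir_text.splitlines():
        stripped = line.strip()
        # Skip blanks, comments, function headers/footers, and block labels.
        if (not stripped
                or stripped.startswith((";", "define", "declare", "}"))
                or stripped.endswith(":")):
            continue
        match = _OPCODE_RE.match(stripped)
        if match:
            counts[match.group(1)] += 1
    return counts


if __name__ == "__main__":
    print(opcode_histogram(IR_SUM_TWICE))
    print(opcode_histogram(IR_DOUBLE_B))
```

Both functions yield the same histogram even though they compute different values, because the feature vector discards operands and data flow. This is the kind of missing detail that a model reading the full IR text does not have to give up.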

Researchers at Meta AI have introduced the Meta Large Language Model Compiler (LLM Compiler), a model designed specifically for code optimization tasks. It is built on Code Llama and trained on an extensive dataset of 546 billion tokens of LLVM intermediate representations (IRs) and assembly code. With this training, the Meta AI team aims to address the specific needs of compiler optimization. The model is released under a bespoke commercial license to facilitate broad use by academic researchers and industry practitioners.

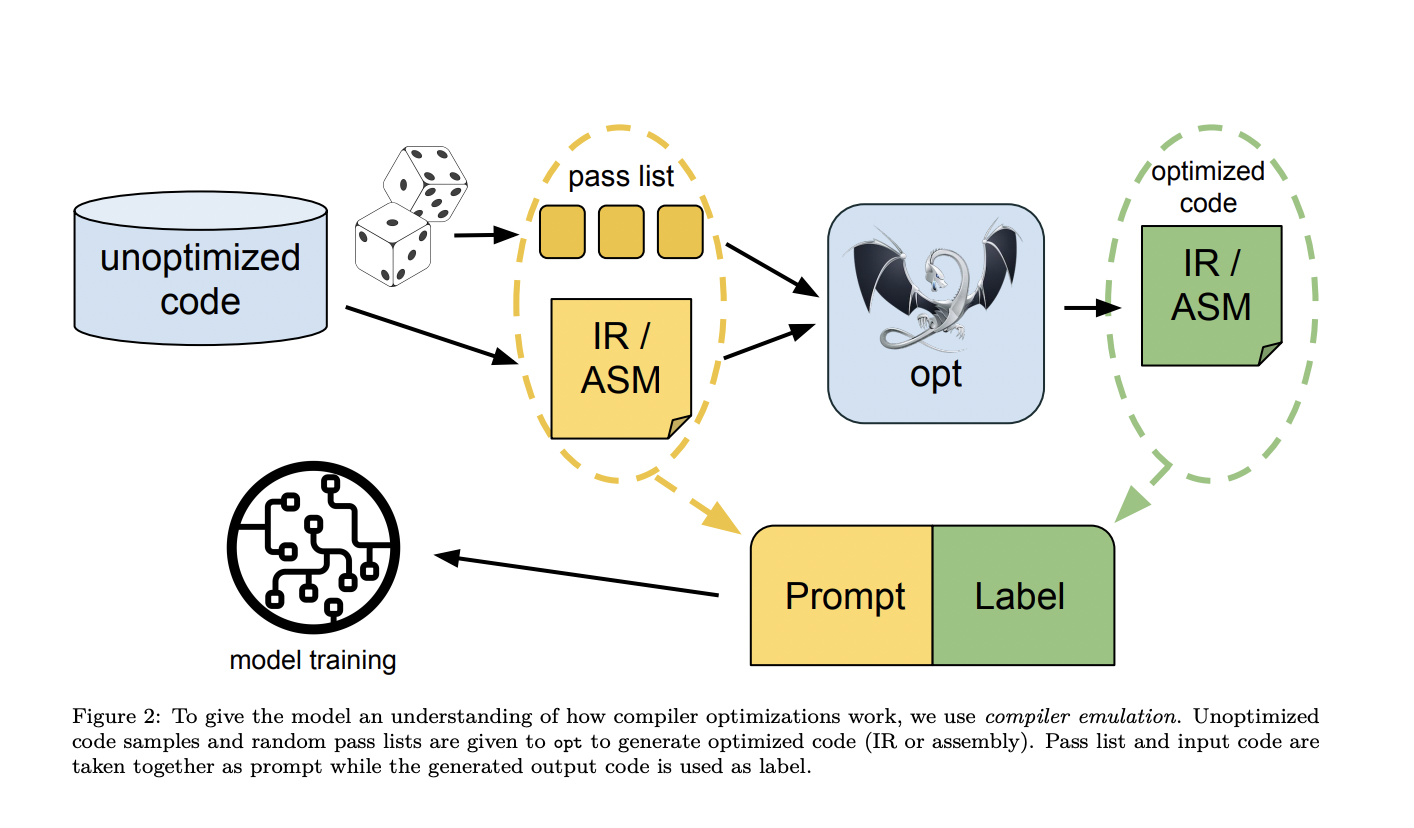

The LLM Compiler is pre-trained on 546 billion tokens of compiler-centric data and then instruction fine-tuned on 164 billion tokens for downstream tasks such as flag tuning and disassembly. It is available in two sizes, 7 billion and 13 billion parameters. This training enables the model to perform sophisticated code size optimization and to convert assembly code back into LLVM-IR. The training stages include understanding the input code, applying various optimization passes, and predicting the resulting optimized code and its size. This multi-stage pipeline prepares the LLM Compiler to handle complex optimization tasks efficiently.
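As a rough illustration of the training signal described above (input code, a list of optimization passes, and the resulting code and size), the following sketch packs one example into a prompt/target pair. The record layout, the field names, the text template, and the instruction counts are all hypothetical; the actual prompt format used by Meta is not given in this article. `instcombine` and `simplifycfg` are real LLVM pass names, used here only as sample values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PassEmulationExample:
    """One hypothetical training record: IR in, passes applied, IR out."""
    input_ir: str
    passes: tuple          # names of optimization passes, in order
    output_ir: str
    instcount_before: int  # instruction count of input_ir
    instcount_after: int   # instruction count of output_ir


def to_prompt_and_target(example):
    """Render a record as (prompt, target) strings for supervised training.

    The template below is an assumption made for illustration only.
    """
    prompt = (
        "Apply the following passes to the code.\n"
        f"Passes: {', '.join(example.passes)}\n"
        f"Code:\n{example.input_ir}\n"
    )
    target = (
        f"Instruction count before: {example.instcount_before}\n"
        f"Instruction count after: {example.instcount_after}\n"
        f"Optimized code:\n{example.output_ir}\n"
    )
    return prompt, target


# A hand-written example; the counts are supplied by hand, not computed.
EXAMPLE = PassEmulationExample(
    input_ir="%x = add i32 %a, 0\nret i32 %x",
    passes=("instcombine", "simplifycfg"),
    output_ir="ret i32 %a",
    instcount_before=2,
    instcount_after=1,
)

if __name__ == "__main__":
    prompt, target = to_prompt_and_target(EXAMPLE)
    print(prompt)
    print(target)
```

In a real pipeline the output IR and instruction counts would come from running the compiler itself, so that the model learns to predict what the compiler actually does rather than hand-written answers.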

The LLM Compiler achieves 77% of the optimizing potential of traditional autotuning methods without requiring extensive compilations. On the disassembly task, the model attains a 45% round-trip rate, with 14% exact-match accuracy. These results highlight the model’s effectiveness in producing optimized code and in lifting assembly back to its intermediate representation. On these specific tasks, the LLM Compiler significantly outperforms other models such as Code Llama and GPT-4 Turbo.
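The two headline numbers can be read as simple ratios. The sketch below shows one plausible way to compute them; the exact definitions are assumptions, not quoted from the paper. It assumes that "optimizing potential" means the size reduction over a baseline build relative to the reduction an autotuner finds, that a "round trip" succeeds when the predicted IR can be lowered back to assembly, and that an "exact match" means the regenerated assembly equals the input. The helpers `lift` and `lower` are hypothetical stand-ins for the model and the compiler.

```python
def fraction_of_autotuner_gain(baseline_size, model_size, autotuner_size):
    """Share of the autotuner's size reduction that the model recovers."""
    autotuner_gain = baseline_size - autotuner_size
    if autotuner_gain <= 0:
        raise ValueError("autotuner must improve on the baseline")
    return (baseline_size - model_size) / autotuner_gain


def disassembly_rates(asm_inputs, lift, lower):
    """Return (round_trip_rate, exact_match_rate) over asm_inputs.

    lift:  asm -> predicted IR (stand-in for the model)
    lower: IR -> asm, or None if the IR fails to compile
    """
    if not asm_inputs:
        raise ValueError("need at least one sample")
    round_trips = 0
    exact_matches = 0
    for asm in asm_inputs:
        regenerated = lower(lift(asm))
        if regenerated is None:
            continue  # predicted IR did not compile
        round_trips += 1
        if regenerated == asm:
            exact_matches += 1
    total = len(asm_inputs)
    return round_trips / total, exact_matches / total


# Toy stand-ins: dictionaries play the role of the model and the compiler.
TOY_LIFT = {"a": "ir_a", "b": "ir_b", "c": "ir_bad", "d": "ir_d"}.get
TOY_LOWER = {"ir_a": "a", "ir_b": "b_variant", "ir_d": "d"}.get

if __name__ == "__main__":
    # Made-up sizes: baseline 1000, autotuner 900, model 923.
    print(fraction_of_autotuner_gain(1000, 923, 900))
    print(disassembly_rates(["a", "b", "c", "d"], TOY_LIFT, TOY_LOWER))
```

With the made-up sizes above, the model recovers 77 of the 100 units the autotuner saves. The toy disassembly data are likewise invented and are unrelated to the reported 45% and 14% figures.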

By leveraging extensive training on compiler-specific data, the LLM Compiler offers a scalable and cost-effective solution for academic researchers and industry practitioners. It addresses the challenges of code optimization and provides an effective tool for enhancing software performance across various hardware platforms. The model’s availability in two sizes, coupled with its strong results on flag tuning and disassembly, underscores its potential to change how compiler optimization tasks are approached.

In conclusion, the Meta LLM Compiler is a notable new tool for code and compiler optimization. By building on the foundational capabilities of Code Llama and enhancing them with specialized training, the LLM Compiler addresses critical challenges in software development. Its ability to optimize code efficiently and its strong benchmark results make it a valuable asset for researchers and practitioners. The model simplifies the optimization process and sets a benchmark for future work in the field.

All credit for this research goes to the researchers of this project.

The post Meta AI Introduces Meta LLM Compiler: A State-of-the-Art LLM that Builds upon Code Llama with Improved Performance for Code Optimization and Compiler Reasoning appeared first on MarkTechPost.